Earlier this year, I discovered there wasn’t really a name for the thing I wanted to talk about. I wanted a word or phrase that includes journalism, social media, search engines, libraries, Wikipedia, and parts of academia, the idea of all these things as a system for knowledge and communication. But there is no such word. Nonetheless, this is an essay asking what all this stuff should do together.

What I see here is an ecosystem. There are narrow real-time feeds such as expertly curated Twitter accounts, and big general reference works like Wikipedia. There are armies of reporters working in their niches, but also colonies of computer scientists. There are curators both human and algorithmic. And I have no problem imagining that this ecosystem includes certain kinds of artists and artworks. Let’s say it includes all public acts and systems which come down to one person trying to tell another, “I didn’t just make this up. There’s something here of the world we share.”

I asked people what to call it. Some said “media.” That captures a lot of it, but I’m not really talking about the art or entertainment aspects of media. Also I wanted to include something of where ideas come from, something about discussions, collaborative investigation, and the generation of new knowledge. Other people said “information” but there is much more here than being informed. Information alone doesn’t make us care or act. It is part of, but only part of, what it means to connect to another human being at a distance. Someone else said “the fourth estate” and this is much closer, because it pulls in all the ideas around civic participation and public discourse and speaking truth to power, loads of stuff we generally file under “democracy.” But the fourth estate today means “the press” and what I want to talk about is broader than journalism.

I’m just going to call this the “digital public sphere”, building on Jürgen Habermas’ idea of a place for the discussion of shared concerns, public yet apart from the state. Maybe that’s not a great name — it’s a bit dry for my taste — but perhaps it’s the best that can be done in three words, and it’s already in use as a phrase to refer to many of the sorts of things I want to talk about. “Public sphere” captures something important, something about the societal goals of the system, and “digital” is a modifier that means we have to account for interactivity, networks, and computation. Taking inspiration from Michael Schudson’s essay “Six or seven things that news can do for democracy,” I want to ask what the digital public sphere can do for us. I think I see three broad categories, which are also three goals to keep in mind as we build our institutions and systems.

1. Information. It should be possible for people to find things out, whatever they want to know. Our institutions should help people organize to produce valuable new knowledge. And important information should automatically reach each person at just the right moment.

2. Empathy. The vast majority of people in the world, we will only know through media. We must strive to represent the “other” to each other with compassion and reality. We can’t forget that there are people on the other end of the wire.

3. Collective action. What good is public deliberation if we can’t eventually come to a decision and act? But truly enabling the formation of broad agreement also requires that our information systems support conflict resolution. In this age of complex overlapping communities, this role spans everything from the local to the global.

Each of these is its own rich area, and each of these roles already cuts across many different forms and institutions of media.

Information

I’d like to live in a world where it’s cheap and easy for anyone to satisfy the following desires:

- “I want to learn about X.”

- “How do we know that about X?”

- “What are the most interesting things we don’t know about X?”

- “Please keep me informed about X.”

- “I think we should know more about X.”

- “I know something about X and want to tell others.”

These desires span everything from mundane queries (“what time does the store close?”) to complex questions of fact (“what will be the effects of global climate change?”). And they apply at all scales; I might have a burning desire to know how the city government is going to deal with bike lanes, or I might be curious about the sum total of humanity’s knowledge of breast cancer: everything we know today, plus all the good questions we can’t yet answer. Different institutions exist to address each of these needs in various ways. Libraries have historically served the need to answer specific questions, desires #1 and #2, but search engines also do this. Journalism strives to keep people abreast of current events, the essence of #4. Academia has focused on how we know and what we don’t yet know, which is #2 and #3.

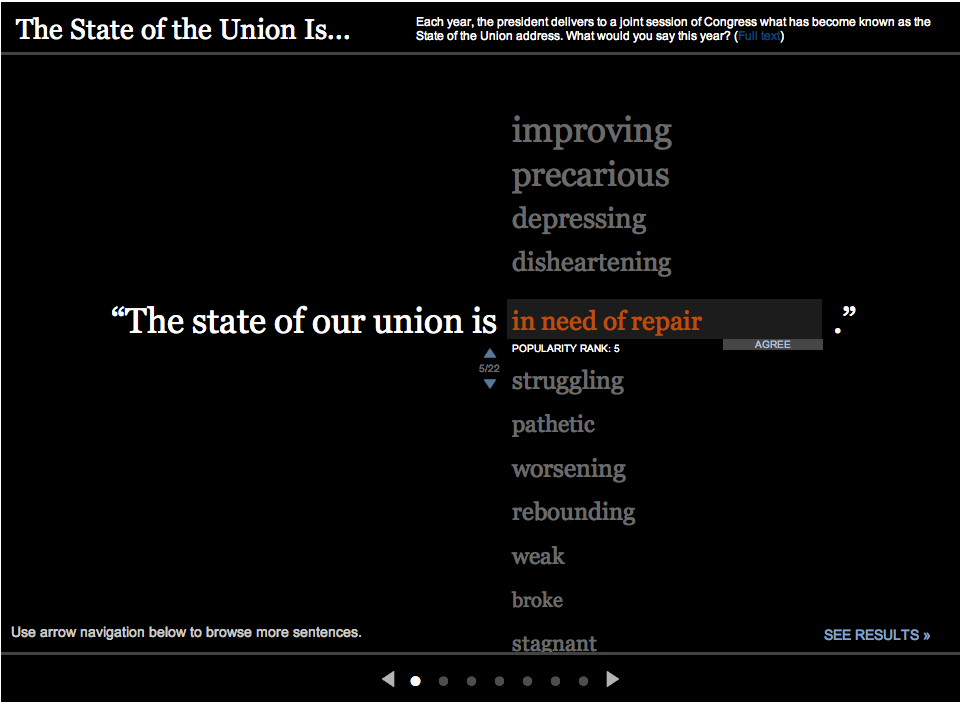

This list includes two functions related to the production of new knowledge, because it seems to me that the public information ecosystem should support people working together to become collectively smarter. That’s why I’ve included #5, which is something like casting a vote for an unanswered question, and #6, the peer-to-peer ability to provide an answer. These seem like key elements in the democratic production of knowledge, because the resources which can be devoted to investigating answers are limited. There will always be a finite number of people well placed to answer any particular question, whether those people are researchers, reporters, subject matter experts, or simply well-informed. I like to imagine that their collective output is dwarfed by human curiosity. So efficiency matters, and we need to find ways to aggregate the questions of a community, and route each question to the person or people best positioned to find out the answer.
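To make the idea of aggregating and routing questions concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the sample questions, the topic tags, the answerer names, and the rule of matching on shared topics are all hypothetical, and a real system would need far richer signals than tag overlap.

```python
from collections import Counter

# Hypothetical data: each entry is one community member "voting" for a question (desire #5).
votes = [
    "When will the bike lanes on Main Street open?",
    "Is the tap water safe after the flood?",
    "When will the bike lanes on Main Street open?",
    "Is the tap water safe after the flood?",
    "When will the bike lanes on Main Street open?",
]

# Hypothetical topic tags for each question and for each potential answerer (desire #6).
question_topics = {
    "When will the bike lanes on Main Street open?": {"transport", "city-hall"},
    "Is the tap water safe after the flood?": {"health", "water"},
}
answerers = {
    "city_hall_reporter": {"city-hall", "budget", "transport"},
    "public_health_researcher": {"health", "water"},
}


def rank_questions(votes):
    """Return (question, vote_count) pairs, most-requested first."""
    return Counter(votes).most_common()


def route(question, question_topics, answerers):
    """Return the answerer sharing the most topics with the question, or None."""
    topics = question_topics.get(question, set())
    # Sorting the names first makes tie-breaking deterministic.
    best = max(sorted(answerers), key=lambda name: len(topics & answerers[name]))
    return best if topics & answerers[best] else None


ranked = rank_questions(votes)
assignments = {q: route(q, question_topics, answerers) for q, _ in ranked}

for question, count in ranked:
    print(f"{count} votes: {question} -> {assignments[question]}")
```

The point of the sketch is only that demand (the vote counts) and supply (who is placed to answer) can live in the same loop; how to weigh them against each other is the open question.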

In the context of professional journalism, this amounts to asking what unanswered questions are most pressing to the community served by a newsroom. One could devise systems of asking the audience (like Quora and StackExchange) or analyze search logs (à la Demand Media). That newsrooms don’t frequently do these things is, I think, an artifact of industrial history, and an unfilled niche in the current ecosystem. Search engines know where the gaps between supply and demand lie, but they’re not in the business of researching new answers. Newsrooms can produce the supply, but they don’t have an understanding of the demand. Today, these two sides of the industry do not work together to close this loop. Some symbiotic hybrid of Google and The Associated Press might be an uncannily good system for answering civic questions.

When new information does become available, there’s the issue of timing and routing. This is #4 again, “please keep me informed.” Traditionally, journalism has answered the question “who should know when?” with “everyone everything as fast as possible” but this is ridiculous today. I really don’t want my phone to vibrate for every news article ever written, which is why only “important” stories generate alerts. But taste and specialization dictate different definitions of “important” for each person, and old answers delivered when I need them might be just as valuable as new information delivered hot and fresh. Google is far down this track with its thinking on knowing what I want before I search for it.

Empathy

There is no better way to show one person to another, across a distance, than the human story. These stories about other people may be informative, sure, but maybe their real purpose is to help us feel what it is like to be someone else. This is an old art; one journalist friend credits Homer with the last major innovation in the form.

But we also have to show whole groups to each other, a very “mass media” goal. If I’ve never met a Cambodian or hung out with a union organizer, I only know what I see in the media. How can and should entire communities, groups, cultures, races, interests or nations be represented?

A good journalist, anthropologist, or writer can live with a community for a while, observing and learning, then articulate generalizations. This is important and useful. It’s also wildly subjective. But then, so is empathy. Curation and amplification can also be empathetic processes: someone can direct attention to the genuine voices of a community. This “don’t speak, point” role has been articulated by Ethan Zuckerman and practiced by Andy Carvin.

But these are still at the level of individual stories. Who is representative? If I can only talk to five people, which five people should I know? Maybe a human story, no matter how effective, is just a single sample in the sense of a tiny part standing for the whole. Turning this notion around, making it personal, I come to an ideal: If I am to be seen as part of some group, then I want representations of that group to include me in some way. This is an argument that mass media coverage of a community should try to account for every person in that community. This is absurd in practical terms, but it can serve as a signpost, a core idea, something to aim for.

Fortunately, more inclusive representations are getting easier. Most profoundly, the widespread availability of peer-to-peer communication networks makes it easier than ever for a single member of a community to speak and be heard widely.

We also have data. We can compile the demographics of social movements, or conduct polls to find “public opinion.” We can learn a lot from the numbers that describe a particular population, which is why surveys and censuses persist. But data are terrible at producing the emotional response at the core of empathy. For most people, learning that 23% of the children in some state live in poverty lacks the gut-punch of a story about a child who goes hungry at the end of every month. In fact there is evidence that making someone think analytically about an issue actually makes them less compassionate.

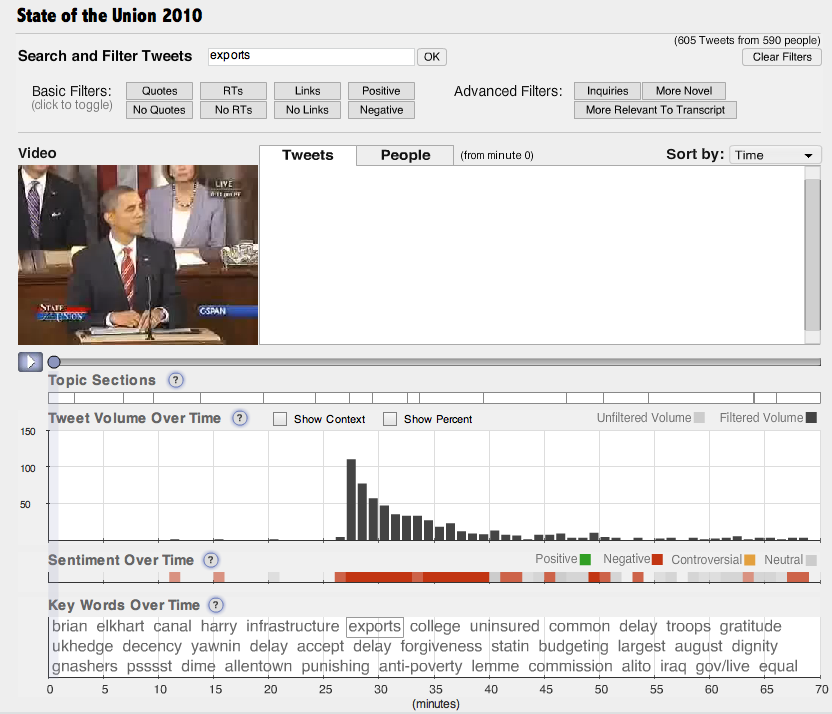

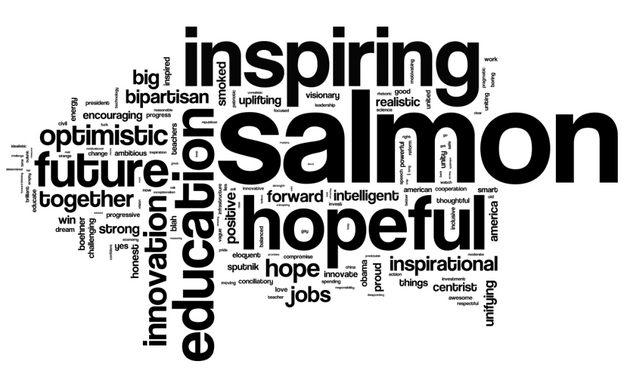

The best reporting might combine human stories with broader data. I am impressed by CNN’s interactive exploration of American casualties in Iraq, which links mass visualization with photographs and stories about each individual. But that piece covers a comparatively small population, only a few thousand people. There are emerging techniques to understand much larger groups, such as by visualizing the data trails of online life, all of the personal information that we leave behind. We can visualize communities, using aggregate information to see the patterns of human association at all scales. I suspect that mass data visualization represents a fundamentally new way of understanding large groups, a way that is perhaps more inclusive than anecdotes yet richer than demographics. Also, visualization forces us into conversations about who exactly is a member of the community in question, because each person is either included in a particular visualization or not. Drawing such a hard boundary is often difficult, but it’s good to talk about the meanings of our labels.

And yet, for all this new technology, empathy remains a deeply human pursuit. Do we really want statistically unbiased samples of a community? My friend Quinn Norton says that journalism should “strive to show us our better selves.” Sometimes, what we need is brutal honesty. At other times, what we need is kindness and inspiration.

Collective action

What a difficult challenge advances in communication have become in recent decades. On the one hand they are definitely bringing us closer to each other, but are they really bringing us together?

– Ryszard Kapuściński, The Other

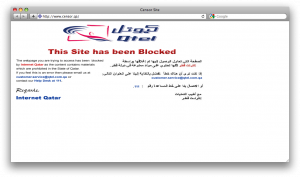

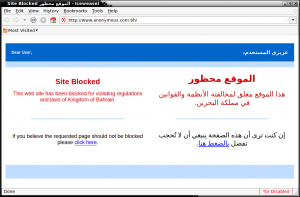

I am sensitive to the idea of filter bubbles and concerns about the fragmentation of media, the worry that the personalization of information will create a series of insular and homogeneous communities, but I cannot abide the implied nostalgia for the broadcast era. I do not see how one-size-fits-all media can ever serve a diverse and specialized society, and so: let a million micro-cultures bloom! But I do see a need for powerful unifying forces within the public sphere, because everything from keeping a park clean to tackling global climate change requires the agreement and cooperation of a community.

We have long had decision making systems at all scales — from the neighborhood to the United Nations — and these mechanisms span a range from very lightweight and informal to global and ritualized. In many cases decision-making is built upon voting, with some majority required to pass, such as 51% or 66%. But is a vicious, hard-fought 51% in a polarized society really the best we can do? And what about all the issues that we will not be voting on — that is to say, most of them?

Unfortunately, getting agreement among even very moderate numbers of people seems phenomenally difficult. People disagree about methods, but in a pluralistic society they often disagree even more strongly about goals. Sometimes presenting all sides with credible information is enough, but strongly held disagreements usually cannot be resolved by shared facts; experimental work shows that, in many circumstances, polarization deepens with more information. This is the painful truth that blows a hole in ideas like “informed public” and “deliberative democracy.”

Something else is needed here. I want to bring the field of conflict resolution into the digital public sphere. As a named pursuit with its own literature and community, this is a young subject, really only begun after World War II. I love the field, but it’s in its infancy; I think it’s safe to say that we really don’t know very much about how to help groups with incompatible values find acceptable common solutions. We know even less about how to do this in an online setting.

But we can say for sure that “moderator” is an important role in the digital public sphere. This is old-school internet culture, dating back to the pre-web Usenet days, and we have evolved very many tools for keeping online discussions well-ordered, from classic comment moderation to collaborative filtering, reputation systems, online polls, and various other tricks. At the edges, moderation turns into conflict resolution, and there are tools for this too. I’m particularly intrigued by visualizations that show where a community agrees or disagrees along multiple axes, because the conceptually similar process of “peace polls” has had some success in real-world conflict situations such as Northern Ireland. I bet we could also learn from the arduously evolved dispute resolution processes of Wikipedia.

It seems to me that the ideal of legitimate community decision making is consensus, 100% agreement. This is very difficult, another unreachable goal, but we could define a scale from 51% agreement to 100%, and say that the goal is “as consensus as possible” decision making, which would also be “as legitimate as possible.” With this sort of metric (and always remembering that the goal is to reach a decision on a collective action, not to make people agree for the sake of it) we could undertake a systematic study of online consensus formation. For any given community, for any given issue, how fragmented is the discourse? Do people with different opinions hang out in different places online? Can we document examples of successful and unsuccessful online consensus formation, as has been done in the offline case? What role do human moderators play, and how can well-designed social software contribute? How do the processes of online agreement and disagreement play out at different scales and under different circumstances? How do we know when the process has converged to a “good” answer, and when it has degraded into hegemony or groupthink? These are mostly unexplored questions. Fortunately, there’s a huge amount of related work to draw on: voting systems and public choice theory, social network analysis, cognitive psychology, information flow and media ecosystems, social software design, issues of identity and culture, language and semiotics, epistemology…
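One way to picture the scale from a bare majority to full consensus is as a single number between 0 and 1. The Python sketch below is an illustration under stated assumptions, not an established measure: the function name `consensus_score`, the linear mapping, and the choice of 50% as the zero point are all mine.

```python
def consensus_score(support, total, threshold=0.5):
    """Map the share of a community backing a decision onto a 0-to-1 scale.

    0.0 means the decision does not clear a simple majority or only just does;
    1.0 means unanimous agreement. The linear mapping is an assumption.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= support <= total:
        raise ValueError("support must be between 0 and total")
    share = support / total
    return max(0.0, (share - threshold) / (1.0 - threshold))


# A hard-fought 51% barely registers; 75% sits halfway to full consensus.
for support in (51, 75, 100):
    print(support, round(consensus_score(support, 100), 2))
```

A number like this says nothing about how the agreement was reached, so it would have to be read alongside the questions above about fragmentation, moderation, and groupthink.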

I would like conflict resolution to be an explicit goal of our media platforms and processes, because we cannot afford to be polarized and gridlocked while there are important collective problems to be solved. We may have lost the unifying narrative of the front page, but that narrative was neither comprehensive nor inclusive: it didn’t always address the problems of concern to me, nor did it ask me what I thought. Effective collective action, at all relevant scales, seems a better and more concrete goal than “shared narrative.” It is also an exceptionally hard problem (in some ways it is the problem of democracy itself), but there’s lots to try, and our public sphere must be designed to support this.

Why now?

I began writing this essay because I wanted to say something very simple: all of these things (journalism, search engines, Wikipedia, social media and the lot) have to work together to common ends. There is today no one profession which encompasses the entirety of the public sphere. Journalism used to be the primary bearer of these responsibilities, or perhaps that was a well-meaning illusion sprung from near monopolies on mass information distribution channels. Either way, that era is now approaching two decades gone. Now what we have is an ecosystem, and in true networked fashion there may not ever again be a central authority. From algorithm designers to dedicated curators to, yes, traditional on-the-scene pro journalists, a great many people in different fields now have a part in shaping the digital public sphere. I wanted to try to understand what all of us are working toward. I hope that I have at least articulated goals that we can agree are important.