We live in a cacophony of news, but most of it is just echoes. Generating news is expensive; collecting it is not. This is the central insight of the news aggregator business model, be it a local paper that runs AP Wire and Reuters stories between ads, or web sites like Topix, Newser, and Memeorandum, or for that matter Google News. None of these sites actually pay reporters to research and write stories, and professional journalism is in financial crisis. Meanwhile there are more bloggers, but even more re-blogging. Is there more or less original information entering the web this year than last year? No one knows.

A computer could answer this question. A computer could trace the first, original source of any particular article or statement. The effect would be like donning special glasses in the hall of mirrors that is current news coverage, letting you spot the true sources without being distracted by the reflections. The required technology is nearly here.

This is more than geekery if you need to know whether something is true. Last week I was researching a man named Michael D. Steele after reading a newly leaked document containing his name. Steele gained fame as one of the stranded commanders in Black Hawk Down, but several of his soldiers later killed three unarmed Iraqi men. I quickly found many news stories (1, 2, 3, 4, 5, 6, 7, etc.) claiming that Steele had ordered his men to “kill all military-age males.” This is a serious accusation, and widely reprinted, but no number of news articles, blog posts, and reblogs can make a false statement more true. I needed to know who first reported this statement, and what its original source was.

First I had to deal with straight-up duplication of stories. The first reference above is an Associated Press (AP) story which included the quote, saying it was from “sworn statements obtained by the Associated Press.” The subsequent MSNBC article is in fact just a reprint of the AP story. There were other reprints, each on a different outlet but credited to AP in the standard practice of newswire syndication. I can’t argue with the ethics or legality of the practice, but this type of mirroring does amplify a story’s apparent significance on the web.

The second level of indirection is the hyperlink. One of the references above is an ABC News Blog story which refers to the AP article, linking to it and one other related story. Although the text is new, this article is nothing more than a rehashing of facts presented elsewhere. For research or authentication purposes, it’s basically worthless.

Finally, there is the uncredited reblog, exemplified by a post on the blog Caffeinated Politics where the key phrase is repeated without links or attribution. Even the article on CounterPunch, headlined “Kill All Military Age Men,” does not provide any sources at all.

In my manual analysis, only the AP article and a piece in the New York Times were original research. Out of the dozens (or hundreds?) of articles, blog posts, and screaming headlines, only two people/organizations had actually bothered to obtain original information. This doesn’t mean that Michael D. Steele did not order his troops to “kill all military-age males.” In fact, the NYT article names four soldiers under his command who testified, on August 2nd at a military Article 32 hearing, that he did. This is what makes the statement reliable, not the ten thousand reblogs.

I shouldn’t have to do this sort of analysis by hand.

We’re getting there. In 2007, Google News introduced a feature that eliminates duplicated stories from its default results display. This is simple elimination of textual duplicates, a reaction to newswire syndication. Slightly more advanced algorithms can be used to detect and cull near duplicates, such as the techniques Google has long used for web pages (near duplicates shouldn’t count as more than one item in the results list).
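To make the difference between exact and near duplicates concrete, here is a minimal Python sketch of both. It is not Google’s method, which I don’t know in detail; it uses the textbook w-shingling approach, treating each document as the set of overlapping four-word sequences it contains and comparing those sets with Jaccard similarity. The function names and the 0.5 threshold are illustrative assumptions.

```python
import hashlib
import re
from itertools import combinations


def normalize(text: str) -> str:
    """Lowercase and drop punctuation so trivial edits don't change the result."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def fingerprint(text: str) -> str:
    """Exact-duplicate key: identical normalized text gives an identical hash."""
    return hashlib.sha1(normalize(text).encode("utf-8")).hexdigest()


def shingles(text: str, w: int = 4) -> set:
    """The set of overlapping w-word sequences (w-shingles) in the text."""
    words = normalize(text).split()
    return {tuple(words[i:i + w]) for i in range(max(len(words) - w + 1, 1))}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    return len(a & b) / len(a | b) if (a or b) else 1.0


def near_duplicate_pairs(docs: dict, threshold: float = 0.5, w: int = 4) -> list:
    """Pairs of document ids whose shingle sets overlap at least `threshold`."""
    sets = {doc_id: shingles(text, w) for doc_id, text in docs.items()}
    return [(a, b) for a, b in combinations(sorted(docs), 2)
            if jaccard(sets[a], sets[b]) >= threshold]
```

Comparing every pair is quadratic in the number of documents, so at web scale the same similarity is usually estimated with MinHash signatures and locality-sensitive hashing rather than computed exactly.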

I want more. For any particular paragraph, phrase or statement, I want to know exactly who said it first and where they got it from. I want automatic culling of cut-and-paste “reporting” and unattributed quotations (and plagiarism). I want my computer to automatically track back through hyperlinks when they’re present, and do deep textual analysis to determine who references whom even when the content is unattributed. The software should also analyze publication dates, where available, to see who said what first.
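The simplest piece of this, finding who printed a given phrase first, might look something like the sketch below. It assumes each page has already been fetched and dated (the `Doc` record and `who_said_it_first` are hypothetical names), matches the phrase as whole words after ignoring case and punctuation, and sorts undated pages to the end because they can’t be placed in time.

```python
import re
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Doc:
    url: str
    published: Optional[datetime]  # None when the page carries no usable date
    text: str


def normalize(text: str) -> str:
    """Lowercase and reduce to space-separated words, ignoring punctuation."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def who_said_it_first(docs: List[Doc], phrase: str) -> List[Doc]:
    """Documents containing `phrase` as whole words, earliest first; undated last."""
    # Padding with spaces keeps "kill" from matching inside "skill".
    target = f" {normalize(phrase)} "
    hits = [d for d in docs if target in f" {normalize(d.text)} "]
    return sorted(hits, key=lambda d: (d.published is None, d.published or datetime.min))
```

Publication dates on the web are often missing or wrong, so the earliest match is a candidate for the original, not proof of it.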

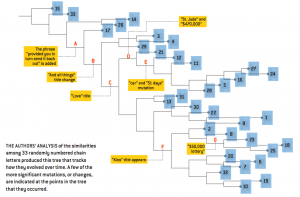

What I want is the phylogenetic tree of any particular story or post, a graph which shows which articles “evolved” from which ancestors, and therefore which article or articles constitute the originals, the raw input of real-world information into the ‘net. In fact, phylogenetic trees have already been applied to documents. In an article published in Scientific American in 2003, the authors analyzed 33 different versions of a chain letter with algorithms originally designed to track evolutionary changes in genetic sequences, and were able to deduce which was the original version.
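A rough sketch of the idea in Python follows. It measures how far apart two texts are with normalized compression distance (NCD), a compression-based estimate of how much information they share, computed here with `zlib`; I’m assuming this is close in spirit to the chain-letter study’s measure, not identical to it. Instead of a proper phylogenetic method such as neighbor joining, it links the documents with a minimum spanning tree (Prim’s algorithm), a deliberate simplification. The tree is undirected, so deciding which node is the original still takes dates or other evidence.

```python
import zlib
from itertools import combinations
from typing import Dict, List, Tuple


def compressed_size(text: str) -> int:
    return len(zlib.compress(text.encode("utf-8"), 9))


def ncd(x: str, y: str) -> float:
    """Normalized compression distance: small when one text adds little
    information to the other, approaching 1 for unrelated texts."""
    cx, cy, cxy = compressed_size(x), compressed_size(y), compressed_size(x + y)
    return (cxy - min(cx, cy)) / max(cx, cy)


def spanning_tree(texts: Dict[str, str]) -> List[Tuple[str, str, float]]:
    """Prim's minimum spanning tree over pairwise NCD.
    Returns (attached_to, new_node, distance) edges; the tree is undirected."""
    ids = sorted(texts)
    if not ids:
        return []
    dist = {}
    for a, b in combinations(ids, 2):
        dist[(a, b)] = dist[(b, a)] = ncd(texts[a], texts[b])
    in_tree = {ids[0]}
    edges = []
    while len(in_tree) < len(ids):
        # Cheapest edge from the current tree to any document not yet in it.
        u, v = min(((u, v) for u in in_tree for v in ids if v not in in_tree),
                   key=lambda e: dist[e])
        edges.append((u, v, dist[(u, v)]))
        in_tree.add(v)
    return edges
```

Successive copies of a letter tend to end up adjacent in the tree, because each one adds only a small edit’s worth of new information to its neighbor.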

This type of analysis only works with identical snippets of text — copied articles that are modified, paragraphs cut and pasted, quotations. More sophisticated text analysis algorithms will be able to handle paraphrased reports, where an article is rewritten without adding substantial new information. General semantic analysis of news stories is coming, even for audio and video, at which point it will be possible to track a single statement through all its rewritings and rewordings as it passes from article to article to blog. Combined with information from hyperlinks and posting times, we will be able to construct a “source tree” like the one above for any given story.
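Here is a minimal sketch of one way hyperlinks and posting times might be combined into such a tree. The parent-selection rules are my own assumptions, not an established algorithm: if a document links to an earlier document in the collection, that link is taken as its source; otherwise its source is the most textually similar earlier document, provided their three-word shingle overlap clears a hypothetical `min_sim` threshold; if nothing qualifies, the document is a candidate original. Plain word overlap will miss paraphrase, which is exactly where more sophisticated text analysis would have to come in.

```python
import re
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List, Optional


@dataclass
class Doc:
    url: str
    published: datetime
    text: str
    links: List[str] = field(default_factory=list)  # outgoing hyperlinks


def shingle_set(text: str, w: int = 3) -> set:
    """Overlapping w-word sequences, ignoring case and punctuation."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + w]) for i in range(max(len(words) - w + 1, 1))}


def similarity(a: str, b: str) -> float:
    """Jaccard similarity of the two texts' shingle sets."""
    sa, sb = shingle_set(a), shingle_set(b)
    return len(sa & sb) / len(sa | sb)


def source_tree(docs: List[Doc], min_sim: float = 0.2) -> Dict[str, Optional[str]]:
    """Map each document URL to its inferred source URL (None = candidate original)."""
    by_url = {d.url: d for d in docs}
    parent: Dict[str, Optional[str]] = {}
    for d in docs:
        # Rule 1 (assumed): a link to an earlier document counts as attribution.
        linked = [by_url[u] for u in d.links
                  if u in by_url and by_url[u].published < d.published]
        if linked:
            parent[d.url] = max(linked, key=lambda p: similarity(p.text, d.text)).url
            continue
        # Rule 2 (assumed): otherwise, the most similar earlier document is the
        # source, but only if the overlap suggests unattributed copying.
        earlier = [p for p in docs if p.published < d.published]
        best = max(earlier, key=lambda p: similarity(p.text, d.text), default=None)
        if best is not None and similarity(best.text, d.text) >= min_sim:
            parent[d.url] = best.url
        else:
            parent[d.url] = None  # nothing earlier explains it: candidate original
    return parent


def primary_reports(tree: Dict[str, Optional[str]]) -> List[str]:
    """Documents with no inferred source: the candidate originals."""
    return [url for url, src in tree.items() if src is None]
```

The candidate originals returned by `primary_reports` are what a “primary reports only” view in a news aggregator would show.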

We’ll finally be able to tell how much content we actually have, and where it came from. (We could even track the evolution of memes.)

It’s not that repeated coverage and discussion of the same story adds nothing. A major story should be covered by multiple outlets, and quotation, paraphrasing, and reblogging are how interesting or important stories spread; telling others about what we know is fundamentally how societal awareness comes to be. However, yelling something louder doesn’t make it more significant, or more true. In the balance between awareness and vacuous repetition, I refer to Ethan Zuckerman’s web 2.0 maxim: don’t speak, point.

But I don’t want to set guidelines for authors. I want software that is smart enough to parse the anarchy of the web and tell me what is a reflection and what is not, and I want everyone else to have this software too. I want to be able to see the source tree for every article or fact of interest to me, and I want filtered views on my news aggregators that show only the primary reports. It’s not important (or remotely realistic) that every reader scrutinize the sources for every article, but it is important that it be easy to do so. The interested amateur should be able to trace statements in a few clicks; this should deter the spread of unsourced lies as truth, and be a stumbling block for would-be propaganda campaigns. In traditional journalism, the tracking and validation of sources was the responsibility of the media monopolies. If we are witnessing the dawn of an era where we all get to have our say, if the infosphere is going to be radically democratized and expanded a millionfold, then it is suddenly the responsibility of all of us to monitor the quality of our information. For this we need tools.

UPDATE (October 2010): Since I wrote this, the Memetracker project demonstrated a whole-web news tracking service that has much of the capability I wished for. It even works by building text mutation trees. More on Memetracker and what it means for news at the Nieman Journalism Lab. My original post also missed the significance of social networking tools for the spread of news. There is now a fascinating project that aims to detect and track the source of political smear campaigns on Twitter, the Truthy project. We’re getting there, technologically speaking. Now we just need to get the technology into our everyday news reading apps.

Yes, yes, a thousand times yes.

This is something I’ve wanted in an aggregator for years! Please keep me informed; and if you want a sounding board I can describe some use cases outside of news that I’ve envisioned for such a tool. Keep me posted!

On a slight tangent – what happens when a journalist does “original research” but refuses to identify their source? How can you determine whether they just made up whatever they wished to print?

I could paraphrase most of a story crediting AP or Reuters, then add my own scintillating tidbit from “undisclosed highly-placed sources” or similar in order to attract a few extra readers. So long as they’re generic enough to not be disproved (or circulate widely through cut-n-paste before being disproved), or an accurate guess, what stops me?