The State of the Union is a big pre-planned event, so it’s a great place to showcase new approaches and techniques. What do digital news organizations do when they go all out? Here’s my roundup of online coverage Tuesday night.

Live coverage

The Huffington Post, the New York Times, the Wall Street Journal, ABC, CNN, Mashable, and many others, including even Mother Jones, had live web video. But you can get live video on television, so perhaps the digitally native form of the live blog is more interesting. This can include commentary from multiple reporters, reactions from social media, link round-ups, etc. The New York Times, the Boston Globe, the Wall Street Journal, CNN, MSNBC, and many others had a live blog. The Huffington Post’s effort was particularly comprehensive, continuing well into Wednesday afternoon.

Multi-format, socially-aware live coverage is now standard, and by my reckoning makes television look meagre. But the experience is not really available on tablet and mobile yet. For example, almost all of the live video feeds were in Flash and therefore unavailable on Apple devices, as CNET reports.

As for tools, there was some use of Coveritlive, but most live blogs seemed to be using nondescript custom software.

Visualizations

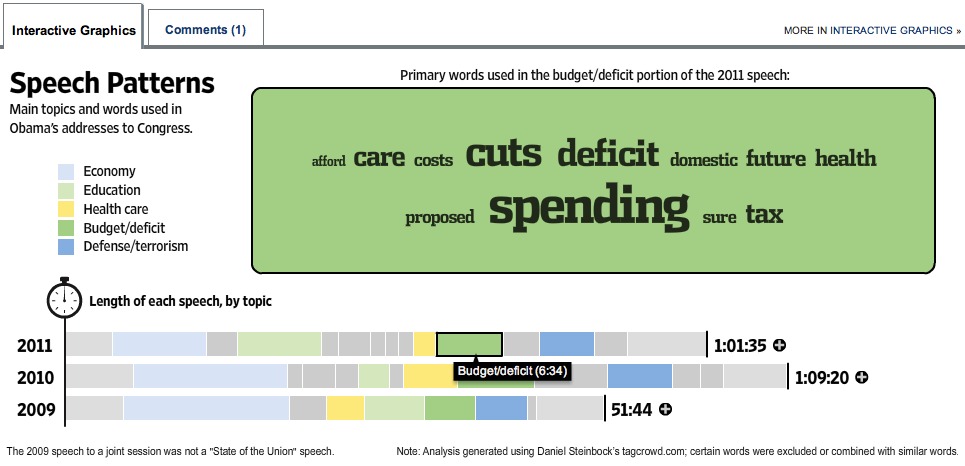

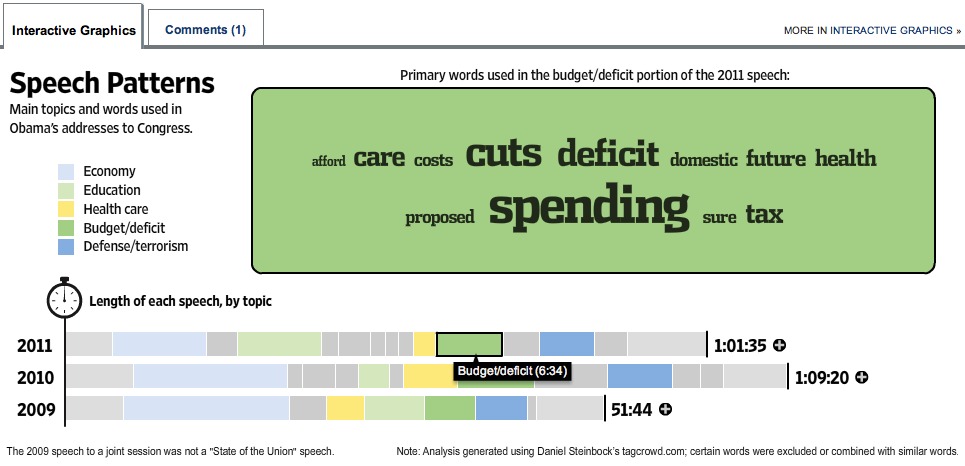

Lots of visualization love this year. But visualizations take time to create, so most of them were rooted in previously available SOTU information. The Wall Street Journal did an interactive topic and keyword breakdown of Obama’s addresses to Congress since 2009, which moved about an hour after Tuesday’s speech concluded.

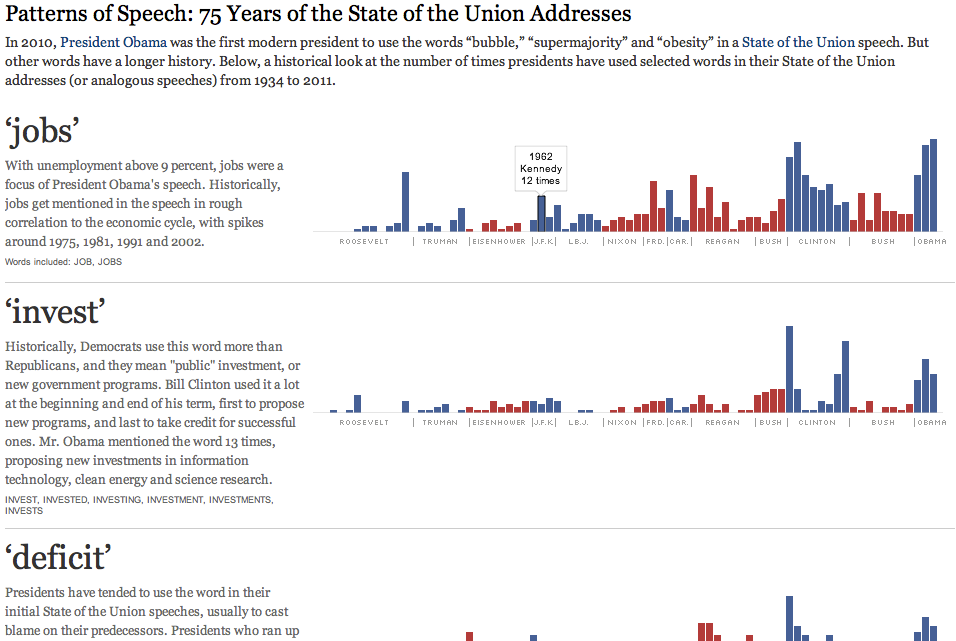

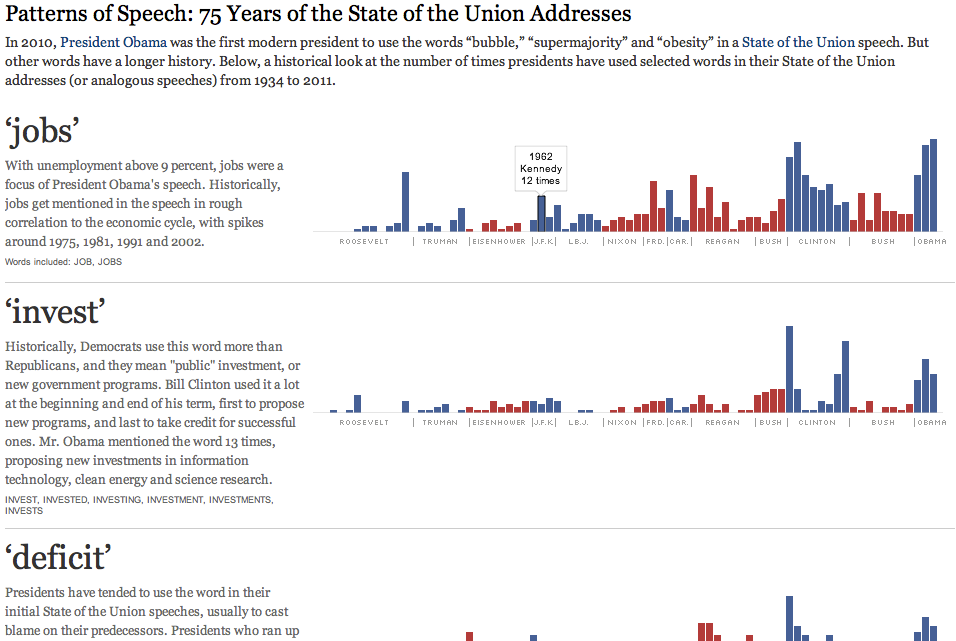

The New York Times had a snazzy graphic comparing the topics of 75 years of SOTU addresses, by looking at the rates of certain carefully chosen words. Rollovers for individual counts, but mostly a flat thing.

The Guardian Data Blog took a similar historical approach, with Wordles for SOTU speeches from Obama and seven other presidents back to Washington. The graphic is a huge image, definitely intended for big print pages. Being the Data Blog, they also put the word frequencies for these speeches into a downloadable spreadsheet.

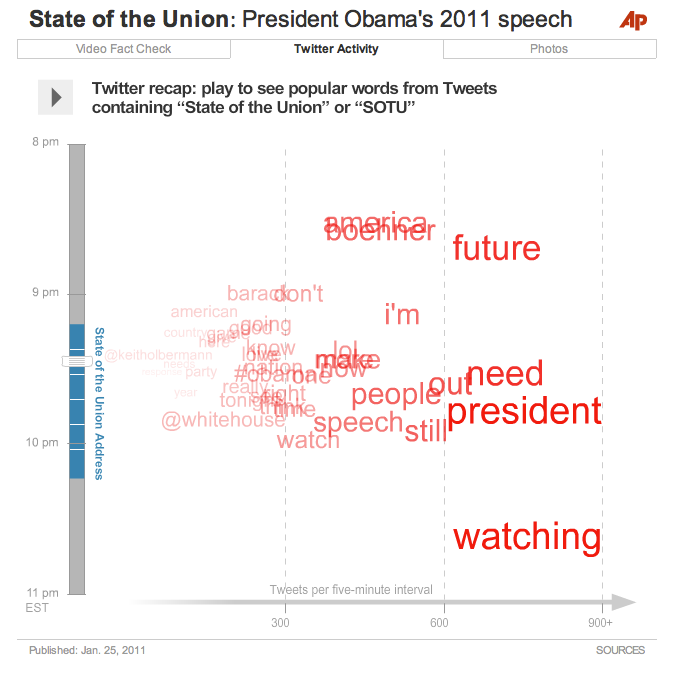

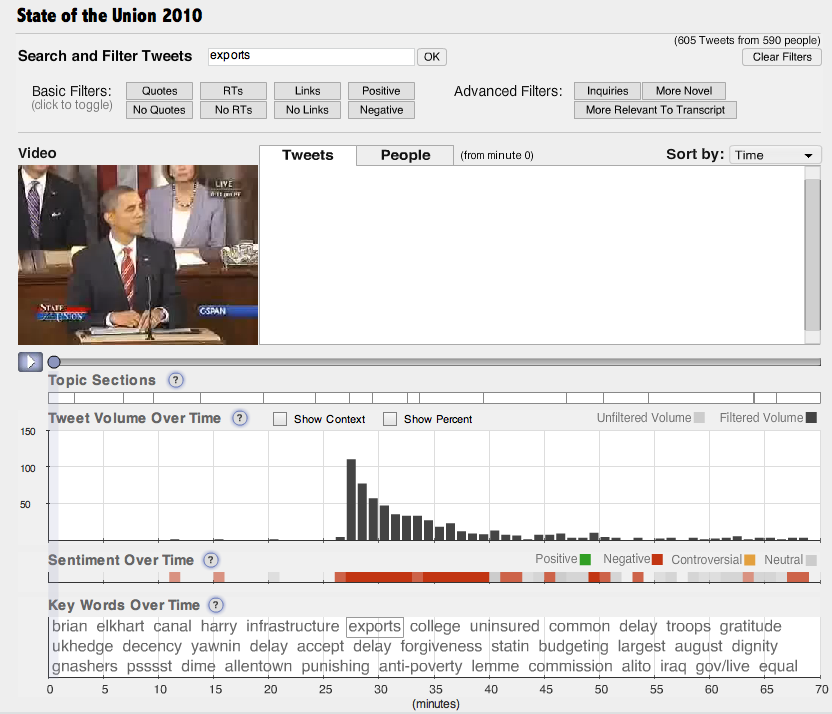

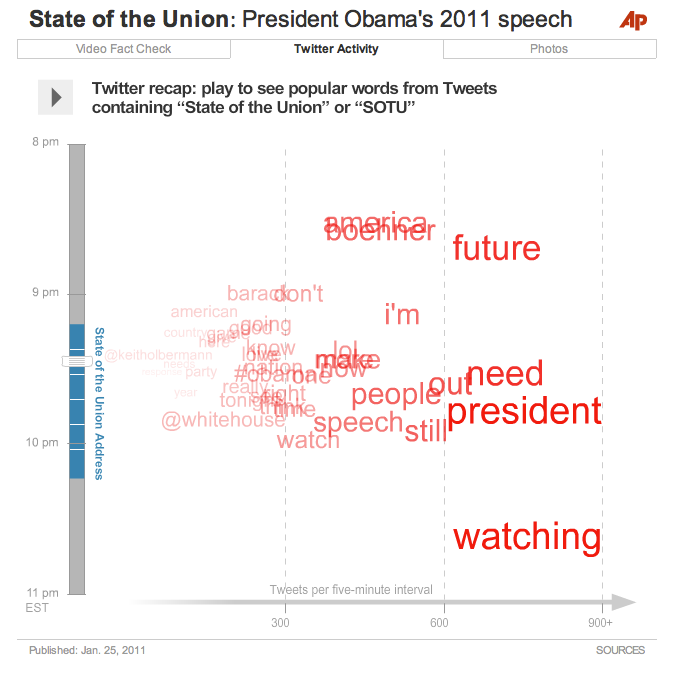

A shout-out to my AP colleagues for all their hard work on our SOTU interactive, which included the video, a fact-checked transcript, and an animated visualization of Twitter responses before, during, and after the State of the Union.

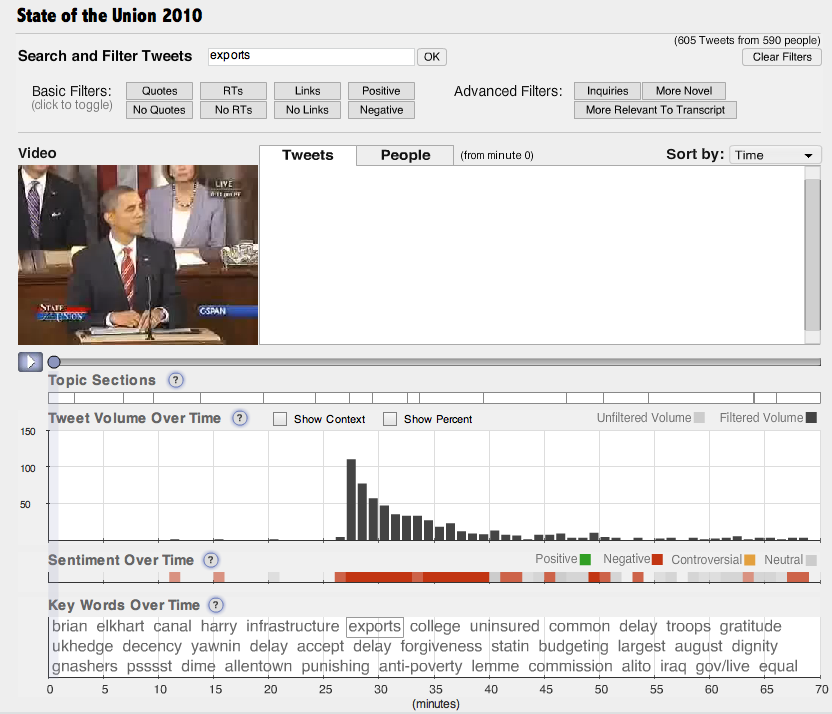

But it’s not clear what, if anything, we can actually learn from such visualizations. In terms of solid journalism content, possibly the best visualization came not from a news organization but from Nick Diakopoulos and co. at Rutgers University. Their Vox Civitas tool does filtering, search, and visualization of over 100,000 tweets captured during the address.

I find this interface a little too complex for general audience consumption — definitely a power user’s tool. But the algorithms are second to none. For example, Vox Civitas compares tweets to the text of the speech within the previous two minutes to detect “relevance,” and the automated keyword extraction — you can see the keywords at the bottom of the interface above — is based on tf-idf and seems to choose really interesting and relevant words. The interactive graph of keyword frequency over time clearly shows the sort of information that I had hoped to reveal with the AP’s visualization.
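To give a feel for what tf-idf keyword extraction does, here is a minimal sketch in Python. It is not the Vox Civitas code, which I haven't seen. The function names, the choice to treat each time window of tweets as one "document," and the sample text are all my own assumptions for illustration. The idea is that a word scores highly for a window when it is frequent there but rare in the other windows, so ubiquitous words like "the" drop to zero.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def tfidf_keywords(documents, top_n=3):
    """Return the top_n highest-scoring tf-idf terms for each document.

    Assumption: each "document" is all the tweet text from one time
    window. This is an illustrative setup, not the tool's actual design.
    """
    tokenized = [tokenize(doc) for doc in documents]
    n_docs = len(tokenized)

    # Document frequency: the number of documents containing each term.
    doc_freq = Counter()
    for tokens in tokenized:
        doc_freq.update(set(tokens))

    results = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens) or 1  # avoid dividing by zero on empty windows
        scores = {
            # tf (share of the window) times idf (log of inverse doc share)
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        }
        # Highest score first; ties broken alphabetically for stable output.
        ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
        results.append([term for term, _ in ranked[:top_n]])
    return results


# Hypothetical tweet text, grouped into three time windows.
sample_windows = [
    "the salmon joke was great salmon salmon",
    "the teachers deserve respect said the president",
    "the deficit freeze and the deficit plan",
]
keywords = tfidf_keywords(sample_windows, top_n=3)
```

A real system would need more than this (stop-word handling, stemming, a smoothed idf, and far more text per window), but the scoring step is the core of why the extracted keywords feel specific to each moment of the speech.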

Fact Checking

A number of organizations did real-time or near real-time fact checking, as Yahoo reports. The Sunlight Foundation used its Sunlight Live system for real-time fact checks and commentary. This platform, incorporating live video, social media monitoring, and other components, is expected to be available as an open-source web app, for the use of other news organizations, by mid-2011.

The Associated Press published a long fact check piece (also integrated into the AP interactive), ABC had their own story, and CNN took a stab at it.

But the heaviest hitter was Politifact, which had a number of fact check rulings within hours and several more by Wednesday evening. These are together in a nice summary article, but, as is their custom, the individual fact checks are extensively documented and linked to primary sources.

Audience engagement

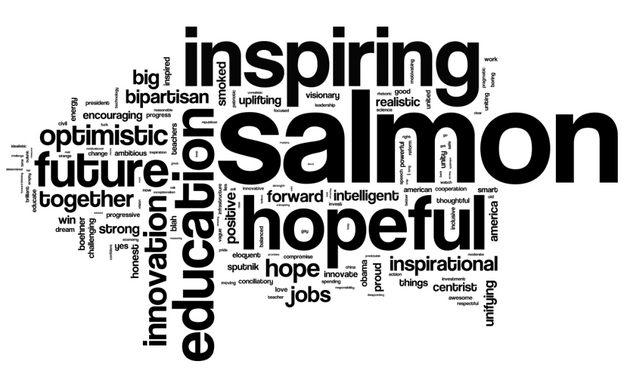

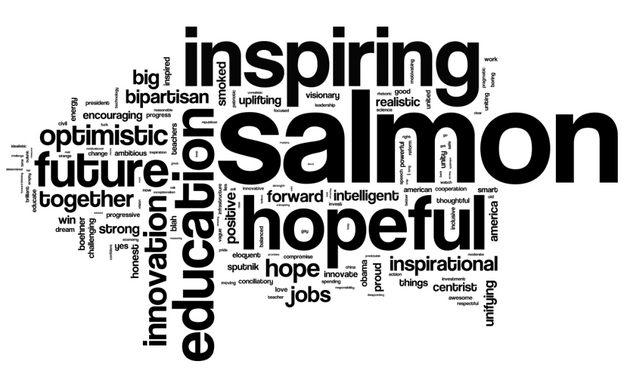

Pretty much every news organization had some SOTU action on social media, though with varying degrees of aggressiveness and creativity. Some of the more interesting efforts involved solicitation of audience responses of a specific kind. NPR asked people to describe their reaction to the State of the Union in three words. This was promoted aggressively on Twitter and Facebook. They also asked for political affiliation, and split out the 4000 responses into Democratic and Republican word clouds:

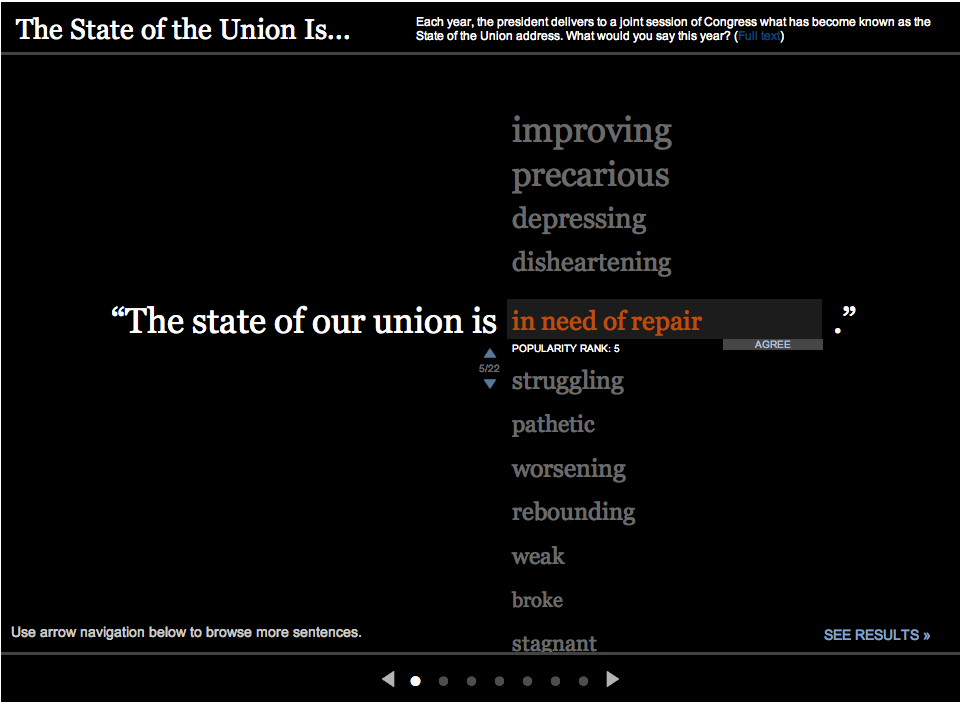

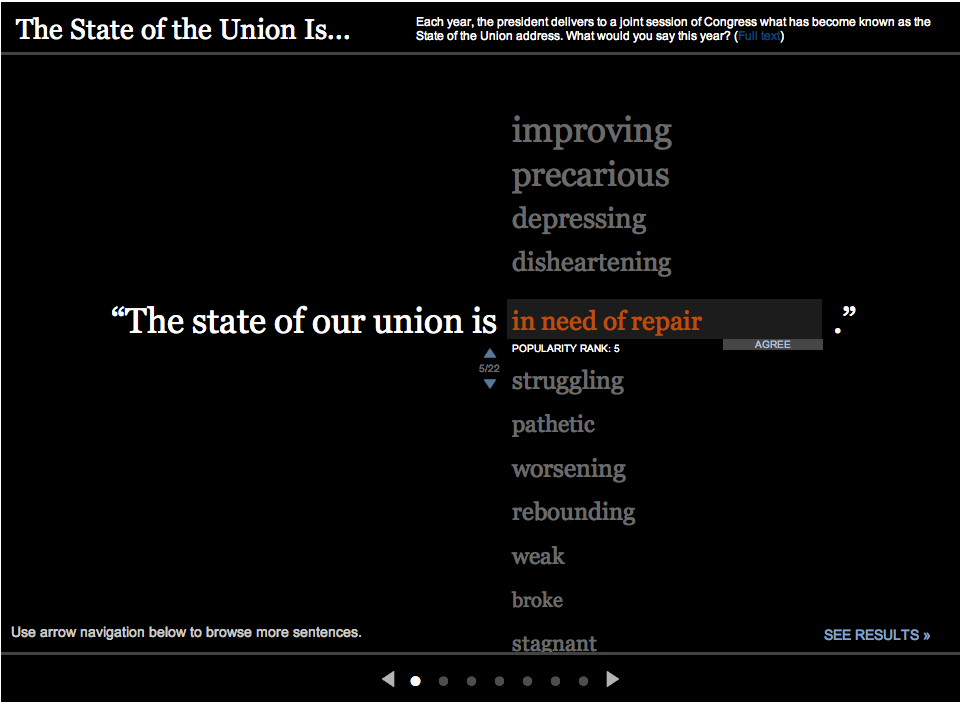

Apparently, Obama’s salmon joke went down well. The Wall Street Journal went live Tuesday morning with “The State of the Union is…” asking viewers to leave a one-word answer. This was also promoted on Twitter. Their results were presented in the same interactive, as a popularity-sorted list.

Aside from this type of interactive, we saw lots of aggressive social media engagement in general. The more social-media savvy organizations were all over this, promoting their upcoming coverage and responding to their audiences. As usual, the Huffington Post was pretty seriously tweeting the event, posting about updates to their live blog, etc., and going well into Wednesday morning. Perhaps inspired by NPR, they encouraged people to tweet their #3wordreaction to the speech. They also collected and highlighted reaction from teachers, Sarah Palin, etc.

But as an AP colleague of mine asked, engagement to what end? Getting people’s attention is great, but then how do we, as journalists, focus that attention in a way that makes people think or act?

The White House

No online media roundup of the SOTU would be complete without a discussion of the White House’s own efforts, including web and mobile app presences. Fortunately, Nieman Journalism Lab has done this for us. Here I’ll just add that the White House livestreamed a Q&A session in front of an audience immediately after the speech, in which White House Office of Public Engagement’s Kal Penn (aka Kumar) read questions from social media. Then Obama himself did an interview Thursday afternoon in which he answered questions submitted as videos on YouTube.