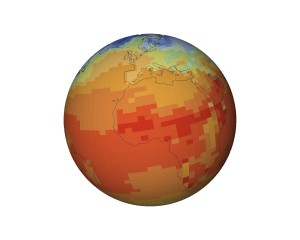

Or at least help us to understand it. Climateprediction.net is a large-scale scientific computing experiment, relying on individual computer users who donate their computer time for the simulation of tens of thousands of global warming scenarios. This is important because, lacking other Earths to experiment with, computer simulations are really the only way we can validate our existing models of climate change — and then predict the future with models we think are accurate.

The climateprediction.net project comprises three separate experiments – one to explore the model we are using, the second to see how well the models replicate past climate and the third to finally produce a forecast for 21st century climate. Each model that we distribute will be used for all three experiments.

Built upon the BOINC scientific computing framework originally developed for the groundbreaking SETI@Home project, Climateprediction.net relies upon hundreds of thousands of volunteer users who donate their spare computer time. All of these machines together are effectively one of the largest supercomputers in the world, and this allows previously impossible scientific studies. The Climateprediction.net scientific team can run not just one or a few climate prediction simulations, but hundreds of thousands. One study performed this way was the Seasonal Attribution Experiment:

We focus on extreme weather events that occur on a seasonal timescale, and in our current project we focus specifically on the United Kingdom floods of Autumn 2000, which occurred during the wettest autumn ever recorded, causing widespread damage and an estimated insured loss of £1.3 billion.

Half of the climate model simulations we run are of the Autumn 2000 period, specifically including within them the effects of human-induced climate change caused by the emission of greenhouse gases. The other half will simulate a representation of the Autumn 2000 climate had there not been any human-induced emissions of greenhouse gases over the last century. By then comparing the results of these two simulated climates, and recording the occurrence of floods like that of Autumn 2000 in each of them, we can determine how the frequency of occurrence (or ‘risk’) of such a flood has changed, and therefore how much risk is attributable to human-induced emissions of greenhouse gases over the last century.

So far, so good. This sort of modeling has been attempted before. Here’s the fun part: because so much computing power is available, a statistical study becomes possible. Rather than looking for floods in a handful of simulated case studies, the CPDN researchers are running 10,000 simulations each of the climate with and without post-industrial greenhouse gas emissions. Each simulation is started with slightly different initial conditions, which are then amplified by the classic butterfly effect into completely different simulated histories. Not every simulation will result in floods in Britain in the Autumn of 2000; in fact, because the flood was a once-in-a-century event in any case, most of them won’t. But by counting the number of floods with and without humanity’s greenhouse gas emissions, we can determine how much our industrial activity has amplified the risk of a large flood.
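To make the counting argument concrete, here is a minimal Python sketch of the arithmetic. It is not the project's actual analysis code: the function names and the flood counts are made up for illustration, and the "fraction of attributable risk" formula is a standard attribution measure that I am assuming fits here, not something the project description spells out.

```python
def flood_probability(floods: int, runs: int) -> float:
    """Estimate flood risk as the share of simulations that produced a flood."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= floods <= runs:
        raise ValueError("floods must be between 0 and runs")
    return floods / runs


def risk_ratio(floods_with: int, runs_with: int,
               floods_without: int, runs_without: int) -> float:
    """How many times more likely a flood is with emissions than without."""
    p_with = flood_probability(floods_with, runs_with)
    p_without = flood_probability(floods_without, runs_without)
    if p_without == 0:
        raise ValueError("no floods in the no-emissions runs; ratio undefined")
    return p_with / p_without


def fraction_attributable_risk(floods_with: int, runs_with: int,
                               floods_without: int, runs_without: int) -> float:
    """Share of the flood risk attributable to emissions: 1 - p_without / p_with."""
    p_with = flood_probability(floods_with, runs_with)
    p_without = flood_probability(floods_without, runs_without)
    if p_with == 0:
        raise ValueError("no floods in the with-emissions runs; fraction undefined")
    return 1.0 - p_without / p_with


if __name__ == "__main__":
    # Hypothetical counts, NOT real results: 10,000 runs in each ensemble.
    floods_with, floods_without, runs = 200, 100, 10_000
    print("risk ratio:", risk_ratio(floods_with, runs, floods_without, runs))
    print("attributable fraction:",
          fraction_attributable_risk(floods_with, runs, floods_without, runs))
```

With those invented counts, floods are twice as likely with emissions as without, so half of the risk would be attributed to emissions. The more simulations there are in each ensemble, the less those two numbers wobble from chance alone, which is why having 10,000 runs per scenario matters.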

This is akin to an epidemiologist determining how much smoking increases the risk of lung cancer, and the massive number of Climateprediction.net simulations is like having not just ten but 10,000 case histories. It’s a breakthrough, and it’s only one of several different Climateprediction.net experiments, which have now cumulatively run almost 400,000 different climate simulations covering 41,000,000 years of hypothetical history. It made me very happy to learn about this project; it gives me hope that climate scientists have unlimited access to one of the largest supercomputers that has ever existed, given to them freely by the citizens of (it says on the map here) 132 different countries. You too can help.

Utter crap.

You don’t think that seriously asking questions about planetary-scale human-caused damage to the environment is worthwhile, Todd?

maybe todd meant the software was utter crap? or maybe the idea to use distributed computing is crap? or maybe since the moon landing was faked it’s impossible we’d have satellites circling the flatness of earth?

The climate modeling project is important and I support it. There are many projects out there which can provide great impact on science and medicine.

I suggest checking out GridRepublic, a nonprofit working in collaboration with the BOINC project to bring volunteer computing to the mainstream, inspire interest in science, and provide educational resources. There are ~50 projects to choose from using BOINC. I encourage checking them out at http://www.gridrepublic.org.