Professional journalism is supposed to be “factual,” “accurate,” or just plain true. Is it? Has news accuracy been getting better or worse in the last decade? How does it vary between news organizations, and how do other information sources rate? Is professional journalism more or less accurate than everything else on the internet? These all seem like important questions, so I’ve been poking around, trying to figure out what we know and don’t know about the accuracy of our news sources. Meanwhile, the online news corrections process continues to evolve, which gives us hope that the news will become more accurate in the future.

Accuracy is a hard thing to measure because it’s a hard thing to define. There are subjective and objective errors, and no standard way of determining whether a reported fact is true or false. But a small group of academics has been grappling with these questions since the early 20th century, and undertaking periodic news accuracy surveys. The results aren’t encouraging. The last big study of mainstream reporting accuracy found errors (defined below) in 61% of 4,800 stories across 14 metro newspapers. This level of inaccuracy, where about one in every two articles contains an error, has persisted for as long as news accuracy has been studied, over seven decades now.

With the explosion of available information, more than ever it’s time to get serious about accuracy, about knowing which sources can be trusted. Fortunately, there are emerging techniques that might help us to measure media accuracy cheaply, and then increase it. We could continuously sample a news source’s output to produce ongoing accuracy estimates, and build social software to help the audience report and filter errors. Meticulously applied, this approach would give a measure of the error rate of each information source, and a measure of the efficiency of their corrections process (currently only about 3% of all errors are corrected). The goal of any newsroom is to get the first number down and the second number up. I am tired of editorials proclaiming that a news organization is dedicated to the truth. That’s so easy to say that it’s meaningless. I want an accuracy process that gives us something more than a rosy feeling.

This is a long post, but there are lots of pretty pictures. Let’s begin with what we know about the problem.

An error in every other story

To measure news accuracy, you need a process for counting errors in published stories. This process has to be independent of the original reporting, otherwise you can’t learn anything about the story that the reporter didn’t already know. Real world reporting isn’t always clearly “right” or “wrong,” so it will often be hard to decide whether something is an error or not. But we’re not going for ultimate Truth here, just a general way of measuring some important aspect of the idea we call “accuracy.” In practice it’s important that the error counting method is simple, clear and repeatable, so that you can compare error rates of different times and sources.

The first systematic measurements of media accuracy approached these problems by asking the story’s sources to find errors in finished articles. In 1936 Mitchell V. Charnley of the University of Minnesota “mailed 1,000 news items clipped from three Minneapolis dailies to persons named in the stories, asking for their perceptions of inaccuracies,” according to a description in a later similar study (Charnley’s original paper isn’t online, boo.) This method of asking the sources whether the story is correct has some obvious shortcomings, and sources have their own agendas. However, it’s good at detecting basic errors of fact, such as incorrect names, dates, places, figures, occupations, etc. And because (roughly) this same methodology has been used in media accuracy studies ever since then, it’s possible to produce (rough) comparisons of accuracy over time.

| Year | Investigator | Number of stories | Errors per story | Percent with errors |
| --- | --- | --- | --- | --- |
| 1936 | Charnley | 591 | 0.77 | 46% |
| 1965 | Brown | 143 | 0.86 | 41% |
| 1967 | Berry | 270 | 1.52 | 54% |
| 1968 | Blankenburg | 332 | 1.17 | 60% |
| 1974 | Marshall | 267 | 1.12 | 52% |
| 1980 | Tillinghast | 270 | 0.91 | 47% |
| 1999 | Maier | 286 | 1.13 | 55% |
| 2005 | Maier/Meyer | 3,287 | 1.36 | 61% |

This table is from Scott R. Maier’s 2005 paper “Accuracy Matters: A Cross-Market Assessment of Newspaper Error and Credibility,” which is both the largest and most recent news accuracy survey, and absolutely required reading for anyone with an interest in the subject. Maier checked 4,800 consecutive articles across 14 American newspapers, and concluded:

> This study’s central finding is sobering: More than 60% of local news and news feature stories in a cross-section of American daily newspapers were found in error by news sources, an inaccuracy rate among the highest reported in nearly seventy years of research, and empirical evidence corroborating the public’s impression that mistakes pervade the press. In about every other article, sources identified “hard” objective errors.

Maier and others counted both simple “objective” errors of fact and “subjective” errors such as over-emphasis or omission of an aspect of the story. Subjective errors are a much fuzzier category, and newsmakers are not necessarily neutral. But, says Maier,

> Subjective errors, though by definition involving judgment, should not be dismissed as merely differences in opinion. Sources found such errors to be about as common as factual errors and often more egregious [as rated by the sources.]

But subjective errors are a very complex category, so for today let’s not count them at all. Purely “objective,” straightforward errors of fact were found in 48% of the stories Maier checked, whereas 61% of stories had errors of either type. There seems to be no escaping the conclusion that, according to the newsmakers, about half of all American newspaper stories contained a simple factual error in 2005. And this rate has held about steady since we started measuring it seven decades ago.

Maier’s work is amazing, and you should go read it. He also investigates which types of errors are most common (top three: misquotation, inaccurate headline, numbers wrong) and how error rate affects perceived story and newspaper credibility, and includes a fantastic bibliography of previous accuracy work. But all of this is still a very limited glimpse. The figures in the table above cover only newspapers, not online news sources, or television and radio. And they cover only “old media” news organizations, not digital-only newsrooms, social media, and blogs. In the end, as extensive as academic accuracy research is, we only have accuracy measurements for a handful of sources at a few points in time. This type of work is not useful for ongoing evaluation of accuracy strategies within a newsroom, and it doesn’t provide consumers with broad enough information to make good choices about where they get their news.

Continuous error sampling

One of the major problems with previous news accuracy metrics is the effort and time required to produce them. In short, existing accuracy measurement methods are expensive and slow. I’ve been wondering if we can do better, and a simple idea comes to mind: sampling.

The core idea is this: news sources could take an ongoing random sample of their output and check it for accuracy — a fact check spot check. Stories could be checked for errors by asking sources in the traditional manner, or through independent verification by a reporter who did not originally work on the story. I’m imagining that one person could check a couple stories per day in this fashion. Although this isn’t much, over time these samples will add up to an ongoing estimate of overall newsroom accuracy.
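As a rough illustration of the spot check, here is a minimal Python sketch of drawing the day's stories to verify. The function name `pick_stories_to_check`, the story IDs, and the two-per-day default are all made up for the example; the only real requirement is that the draw is uniformly random, so that reporters and editors can't influence which stories get checked.

```python
import random

def pick_stories_to_check(story_ids, per_day=2, rng=None):
    """Draw a uniform random sample of today's stories, without replacement.

    story_ids: identifiers of every story published today (hypothetical format).
    per_day:   how many stories one checker can verify in a day (assumed: 2).
    rng:       optional random.Random instance, useful for reproducible draws.
    """
    rng = rng or random.Random()
    pool = list(story_ids)
    # Never ask for more stories than were actually published.
    return rng.sample(pool, min(per_day, len(pool)))

# Hypothetical day with 33 published stories.
todays_stories = [f"story-{n}" for n in range(1, 34)]
to_check = pick_stories_to_check(todays_stories, rng=random.Random(2011))
print(to_check)
```

Each checked story then gets recorded as "error found" or "no error found," and those records are what the accuracy estimate is built from.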

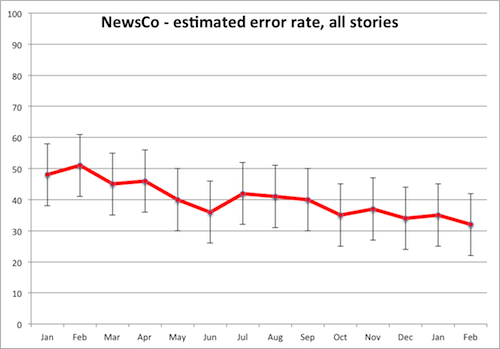

Standard statistical theory tells us what the error on that estimate will be for any given number of samples (If I’ve got this right, the relevant formula is standard error of a population proportion estimate without replacement.) At a sample rate of a few stories per day, daily estimates of error rate won’t be worth much. But weekly and monthly aggregates will start to produce useful accuracy estimates. For example, if a newsroom produces 1,000 stories per month and checks 60 of those — two per day — the error on the monthly estimate will be about ±10% (95% CI.) Or you could average three months of data and get an accuracy estimate to within ±6%. The result would be a graph like this:

This graph shows that NewsCo’s ongoing accuracy efforts are effective: the estimated error rate has decreased from about 50% to 35% over the course of a year. Given the margins of error on the estimates, this is a statistically significant difference indicating a real improvement, not just sampling noise. I would really like to see a news source that displayed an accuracy graph like this on their site. Otherwise, how can anyone really claim that they’re getting the facts right? Or even claim that they’re more accurate than a random blogger?
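For anyone who wants to check the margins of error, here is a small Python sketch of the calculation, assuming the formula really is the standard error of a proportion with the finite population correction. The function name and the worst-case default of a 50% error rate are my own choices for illustration, not part of any standard tool.

```python
import math

def margin_of_error(sample_size, population_size, error_rate=0.5, z=1.96):
    """Half-width of the confidence interval for a sampled proportion.

    Checked stories are drawn without replacement, so the usual
    p(1-p)/n variance is shrunk by the finite population correction.
    error_rate=0.5 is the worst case; z=1.96 corresponds to a 95% interval.
    """
    variance = error_rate * (1 - error_rate) / sample_size
    fpc = (population_size - sample_size) / (population_size - 1)
    return z * math.sqrt(variance * fpc)

# One month: 60 stories checked out of 1,000 published.
monthly = margin_of_error(sample_size=60, population_size=1000)
# Three months pooled: 180 checked out of 3,000 published.
quarterly = margin_of_error(sample_size=180, population_size=3000)
print(f"monthly: ±{monthly:.1%}, quarterly: ±{quarterly:.1%}")
# prints "monthly: ±12.3%, quarterly: ±7.1%"
```

The margin shrinks a little if the true error rate is far from 50%, and it shrinks with the square root of the sample size, so checking four times as many stories only halves it.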

Fixing as many errors as possible

Meanwhile, the online corrections process is evolving. I now know of four different news outlets that have a “report an error” link or form on every story: The Washington Post, the Huffington Post, the Register Citizen of Litchfield County, CT, and the Daily Local of Chester County, PA. The MediaBugs project can also be used to report errors on any other news site.

Asking your users to report inaccuracies strikes me as a fabulous idea, and likely very productive (see: “someone is wrong on the internet!”). I have no knowledge of the quantity of errors submitted using these forms, or how the corrections process works. My suspicion is that each submitted correction sends an email to some hapless Engagement Editor who then has to cull the reports and route each plausible error to the story’s original reporter, if that reporter can be bothered to deal with audience feedback. (Excuse my snark, but more than one seasoned hack has told me how much they hate the idea that responding to users might be part of their job. Thankfully this attitude does seem to be on the way out.)

This very manual error correction system won’t scale. We can do better by asking the users to help filter error reports. It would be straightforward to implement a user-viewable queue of submitted errors for each story. Then the user who spots an error could a) first check to see if it’s already been reported, which would cut down duplicate reports, and b) vote on the severity of existing error reports. The idea is to do collaborative filtering on error reports, so that the most serious and plausible come to the attention of the corrections editor first. Users could be encouraged to submit supporting evidence of the error, in the form of URLs to primary sources, by automatically giving precedence to items which include links.
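To make the queue idea concrete, here is one way it might be sketched in Python. Everything here is hypothetical: the `ErrorReport` fields, the 1-to-5 severity scale, and the ranking rule are assumptions for illustration, not a description of any existing corrections system.

```python
from dataclasses import dataclass, field

@dataclass
class ErrorReport:
    """One user-submitted error report on a story (hypothetical schema)."""
    story_id: str
    description: str
    evidence_urls: list = field(default_factory=list)   # links to primary sources
    severity_votes: list = field(default_factory=list)  # assumed scale: 1 (minor) to 5 (serious)

def priority(report):
    """Sort key: reports with evidence first, then mean severity, then vote count."""
    votes = report.severity_votes
    mean_severity = sum(votes) / len(votes) if votes else 0.0
    return (bool(report.evidence_urls), mean_severity, len(votes))

def corrections_queue(reports):
    """Order reports so the corrections editor sees the strongest ones first."""
    return sorted(reports, key=priority, reverse=True)

reports = [
    ErrorReport("story-1", "Mayor's name misspelled", severity_votes=[2, 3]),
    ErrorReport("story-1", "Budget figure off by a factor of ten",
                ["https://example.org/budget.pdf"], [5, 4, 5]),
    ErrorReport("story-1", "Wrong date for the council vote",
                ["https://example.org/minutes"], [3]),
]
queue = corrections_queue(reports)
for report in queue:
    print(report.description)
```

Putting evidence first in the sort key is the bluntest possible way to give precedence to linked reports. A real system would probably blend evidence and severity into one score, and would need some defense against vote brigading.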

I would really like to see the day when every news story on every device includes a “submit addition or correction” button. And once the corrections process exists, we can start looking at the data it generates. One goal would be to increase the efficiency of the corrections process in catching errors. That will drive the number of detected errors up, which might be very scary for newsrooms which are in the habit of pretending that every story is perfect. No newsroom wants to be the first to let the audience see that half of their stories contain a factual error, even if most of those errors are going to be minor. And yet, if decades of news accuracy research are to be believed, this is inevitable, because those errors are already there across the industry, silent. This makes me suspect that good corrections processes — real, web-native, efficient crowd-sourced corrections — will not be quickly adopted.

But even if you were excited to get real corrections going, how would you know if your process was really catching all of the errors?

Combining accuracy and corrections measures

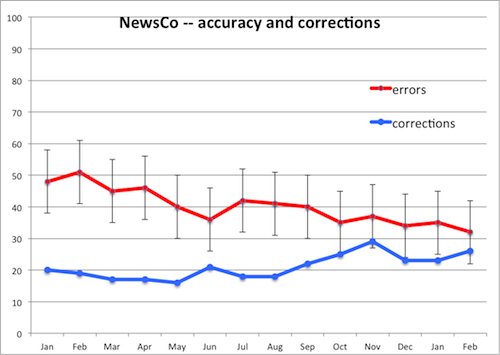

Suppose a newsroom was doing random samples of accuracy, and monitoring the number of errors corrected through the user-submission process and all other correction routes. Then we could plot errors corrected versus (estimated) errors found, like this:

A newsroom monitoring its accuracy and corrections processes in this way would have two goals. First, drive errors down, perhaps by instituting an accuracy checklist, like this one from Steve Buttry. Second, drive corrections up by making it easy and rewarding for users to submit corrections, building better error queuing and filtering systems, assigning more staff resources to corrections, etc. The goal is to have the blue line and the red line meet, meaning that all errors are rapidly corrected.
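The gap between the two lines is simple arithmetic, sketched below in Python. The function name and the input numbers are invented for illustration. Note the assumption that the sampled error rate is the fraction of stories containing at least one error, so "corrected" has to be counted the same way, as stories that received a correction.

```python
def correction_gap(stories_published, sampled_error_rate, stories_corrected):
    """Compare estimated erroneous stories against stories actually corrected.

    sampled_error_rate: fraction of spot-checked stories with at least one error.
    Returns (estimated stories with errors, fraction of those that were corrected).
    """
    estimated_with_errors = stories_published * sampled_error_rate
    if estimated_with_errors == 0:
        return 0.0, 0.0
    return estimated_with_errors, stories_corrected / estimated_with_errors

# Hypothetical month: 1,000 stories, a 48% sampled error rate, 15 corrections run.
estimated, rate = correction_gap(1000, 0.48, 15)
print(f"estimated stories with errors: {estimated:.0f}, corrected: {rate:.1%}")
```

The two lines on the chart meet when the second number reaches 100%. Because the first number carries the sampling margin of error, the ratio is only as trustworthy as the accuracy estimate underneath it.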

Used internally, this sort of chart could give a quantitative understanding of how well a newsroom is doing in terms of accuracy, or at least those aspects of the concept of “accuracy” that are well-captured by the metrics. One could even break it out by desk to see if certain parts of the newsroom are over- or under-performing — or perhaps we’ll learn that certain types of reporting are just harder to get right. Proudly posted externally, this sort of chart would demonstrate a tangible commitment to accuracy, far better than any values statement. And if the metric could be standardized across the industry — in much the same way that, e.g. accountants have standardized their reporting — then we would finally be able to compare the ongoing accuracy of two news sources in an empirical way. I think there might be some real hope for standardized, fair accuracy metrics, because news organizations are obliged to fix the errors they find on an audit, and this will leave a trail in the revision history that serves as a public record of what a newsroom considers an “error” to be.

Accuracy is not what it used to be

The explosion of information sources combined with the decades-long decline in media trust makes this a great time to dream up ways to increase accuracy in journalism. One approach goes like this: the first step would be admitting how inaccurate journalism has historically been. Then we have to come up with standardized accuracy evaluation procedures, in pursuit of metrics that capture enough of what we mean by “true” to be worth optimizing. Meanwhile, we can ramp up the efficiency of our online corrections processes until we find as many useful, legitimate errors as possible with as little staff time as possible. It might also be possible to do data mining on types of errors and types of stories to figure out if there are patterns in how an organization fails to get facts right.

There are some serious practical problems and gnarly missing details here. But think of the prize! I’d love to live in a world where I could compare the accuracy of information sources, where errors got found and fixed with crowd-sourced ease, and where news organizations weren’t shy about telling me what they did and did not know. Basic factual accuracy is far from the only measure of good journalism, but perhaps it’s an improvement over the current sad state of affairs: “aside from prizes, there really aren’t any other metrics for journalism quality.”

Jonathan,

Excellent research and thoughtful observations and suggestions on one of the most important issues in journalism. I should note that my accuracy checklist was inspired by Craig Silverman’s. Craig and Scott Rosenberg have a great idea with the Report an Error Alliance: http://reportanerror.org/

Hillaire Belloc – The Free Press <- Excellent read!

It’s posts like this that make me think that maybe journalism isn’t dying. Maybe journalism isn’t even invented yet. Perhaps the journalism of the 21st century will be to the 20th as chemistry is to alchemy.

BTW, the Wikimedia Foundation is also really interested in quantifying accuracy on Wikipedia. There is a stab at this with some new “rate this article” tools but I think we need to go a lot further.

Jonathan, this is a fantastic post, thank you for it.

I’m writing some thoughts in response and I noticed that your “sad state of affairs” link is busted — would be good to know where it’s supposed to point.

Hey, if you had a “report an error” button here I’d have used it 🙂

Scott– fixed the “sad state of affairs” link. Thanks!

Great post, Jonathan, thank you. This is another place where journalism schools might have a useful role to play. Having journalism students participate with local media to monitor accuracy could help newsrooms and simultaneously teach students new practices for dealing with errors.

This is a wonderful piece. I came across it when I was trying to research how to measure an online article in terms of inches. We all know how to measure a print article, but once it’s placed online and you don’t have a hard copy to measure, how do you come up with the story’s length?

Thank you so much on your thorough definition of Balance in journalism.
