Corruption in the classic sense is when a politician sells their influence. Quid pro quo, pay to play, or just an old-fashioned bribe: whatever you want to call it, this is the smoking gun that every political journalist is trying to find. Recently, data journalists have begun to look for influence peddling using statistical techniques. This is promising, but the data has to be just right, and it’s really hard to turn it into proof.

To illustrate the problems, let’s look at a failure.

On August 23, the Associated Press released a bombshell of a story implying that Clinton was selling access to the US government in exchange for donations to her foundation. I’m impressed by the AP’s initiative in using primary documents to look into a serious question of political ethics. But this is not a good story. It’s already been criticized in various ways. It’s the statistics I want to talk about here — which are, in a word, wrong. (And perhaps the AP now agrees: they changed the headline and deleted the tweet.) Here’s the lede:

> At least 85 of 154 people from private interests who met or had phone conversations scheduled with Clinton while she led the State Department donated to her family charity or pledged commitments to its international programs, according to a review of State Department calendars

There’s no question this has the appearance of something fishy. In that sense alone, it’s probably newsworthy. But the deeper question is not about the appearance, but whether there were in fact behind the scenes deals greased by money, and I think that this statistic is not nearly as strong as it seems. It’s fine to report something that looks bad, but I think news organizations also need to clearly explain when the evidence is limited — or maybe not make an ambiguous statistic the third word in the story.

So here, in detail, are the limitations of this type of data and analysis. The first problem is that these 154 are a limited subset of the more than 1700 people she met with. It only counts private citizens, not government representatives, and this material only covers “about half of her four-year tenure.” So this isn’t really a good sample.

But even if the AP had access to Clinton’s complete calendar, counting the number of Clinton Foundation donors still wouldn’t tell us much. There would still be no way to know if donors had any advantage over non-donors. If “pay to play” means anything, it must surely mean that you get something for paying that you wouldn’t otherwise get. In this case, that “something” is a meeting with the Secretary of State.

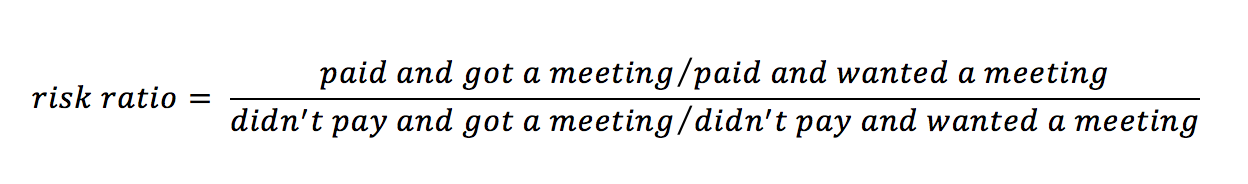

The simplest way to approach the question of advantage is to use a risk ratio, which is normally used to compare things like the risk of dying of cancer if you are and aren’t a smoker, or getting shot by police if you’re black vs. white. Here, we’ll compare the probability that you’ll get a meeting if you are a donor to the probability that you’ll get a meeting if you aren’t a donor. The formula looks like this:
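Written out from the definition in this paragraph (the labels inside the fractions are illustrative wording, not the AP’s):

$$
\text{risk ratio}
= \frac{P(\text{meeting} \mid \text{donor})}{P(\text{meeting} \mid \text{non-donor})}
= \frac{\text{donors who got a meeting} \,/\, \text{donors who wanted a meeting}}
       {\text{non-donors who got a meeting} \,/\, \text{non-donors who wanted a meeting}}
$$

A ratio of 1 means donating made no difference; a ratio above 1 means donors were more likely to get a meeting.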

This summarizes the advantage of paying in terms of increasing your chances of getting a meeting. If 100 people paid and 50 got a meeting, but 1000 people didn’t pay and 500 of those still got a meeting, then paying doesn’t help get you a meeting.
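The worked example above can be checked with a few lines of Python. This is only a minimal sketch: the function name `risk_ratio` and its parameter names are illustrative choices, not anything from the AP’s analysis.

```python
def risk_ratio(paid_met, paid_total, unpaid_met, unpaid_total):
    """Return P(meeting | paid) / P(meeting | did not pay)."""
    p_paid = paid_met / paid_total        # chance of a meeting if you paid
    p_unpaid = unpaid_met / unpaid_total  # chance of a meeting if you did not
    return p_paid / p_unpaid


# The example from the text: 50 of 100 payers and 500 of 1000 non-payers
# got a meeting, so both groups have the same 50% chance.
example = risk_ratio(50, 100, 500, 1000)
print(example)  # 1.0
```

Note that the function needs all four numbers. Two of them are the totals of people who wanted a meeting, whether or not they got one.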

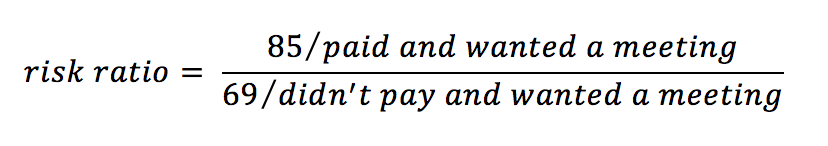

The problem with the AP’s story is that there was no way for them to compute a risk ratio from meeting records. Clinton met with 85 people who donated to her foundation, and 154-85 = 69 who did not. This gives us:
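Plugging the two known counts into the risk ratio, and leaving the unknown totals as labeled placeholders:

$$
\text{risk ratio}
= \frac{85 \,/\, (\text{donors who wanted a meeting: unknown})}
       {69 \,/\, (\text{non-donors who wanted a meeting: unknown})}
$$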

We’re still missing two numbers! We can’t compute the advantage of paying because we don’t know how many donors and how many non-donors wanted a meeting in the first place, only how many of each got one. In other words, we need to know who got turned down for a meeting. The calendars and schedules that reporters can get don’t have that information and never will.

Can we conclude anything at all from the AP’s data? Not much. We can say only a few fairly obvious things. If many more than 85 people donated, then the numerator gets small and there appears to be less advantage. On the other hand, if many more than 69 people wanted a meeting but didn’t donate, the denominator gets small and it looks worse for Clinton.
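To see how much the answer depends on the missing numbers, here is a sketch that plugs in made-up counts of people who sought a meeting. Only 85 and 69 come from the AP’s data; every pair in `scenarios` is a hypothetical placeholder, since the real totals are unknown.

```python
MET_DONORS = 85      # donors who got a meeting (AP's count)
MET_NON_DONORS = 69  # non-donors who got a meeting (154 - 85)


def risk_ratio(donors_who_asked, non_donors_who_asked):
    """Risk ratio for hypothetical totals of people who sought a meeting."""
    p_donor = MET_DONORS / donors_who_asked
    p_non_donor = MET_NON_DONORS / non_donors_who_asked
    return p_donor / p_non_donor


# Hypothetical (donors who asked, non-donors who asked) pairs. None is known.
scenarios = [(100, 1000), (1000, 1000), (4000, 1000), (1000, 100)]
results = {pair: risk_ratio(*pair) for pair in scenarios}

for (donors, non_donors), rr in results.items():
    print(f"donors asked={donors:>5}, non-donors asked={non_donors:>5}: "
          f"risk ratio = {rr:.2f}")
```

The same 85 and 69 produce a ratio far above 1 in some scenarios and far below 1 in others, which is why the meeting counts alone cannot settle the question.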

We might be able to get some idea of who got turned down by looking at the Clinton Foundation contributors list. That page lists 4277 donors who gave at least $10,000. (Far more gave less, but you have to figure that a meeting costs at least some minimum amount.) Almost all of the donors on that list are private citizens, not governments. If we imagine that most of those 4277 donors hoped for a meeting with Clinton, the 85 private donors who did meet with her are only about 2% of those who tried to get a meeting. The numerator in the relative risk formula is small. The denominator might be even smaller if many thousands of people tried to get a meeting using exactly the same channels as the donors but ¯\_(ツ)_/¯ we’ll never know.
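The 2% figure is simple arithmetic on the two counts in this paragraph. The sketch below also shows what happens if only some of those donors sought a meeting; that share (`share_asking`) is a hypothetical parameter, since nobody knows the real value.

```python
DONORS_10K_PLUS = 4277  # donors of at least $10,000 on the contributors list
DONORS_WHO_MET = 85     # donors who got a meeting (AP's count)


def success_rate(share_asking):
    """Fraction of meeting-seeking donors who got one, assuming the given
    share of the 4277 listed donors actually asked (hypothetical)."""
    return DONORS_WHO_MET / (DONORS_10K_PLUS * share_asking)


rate_if_all_asked = success_rate(1.0)
rate_if_half_asked = success_rate(0.5)
print(f"all asked:  {rate_if_all_asked:.1%}")   # 2.0%
print(f"half asked: {rate_if_half_asked:.1%}")  # 4.0%
```

Even if only half of those donors asked, the numerator of the risk ratio stays small.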

In other words, there is no way of finding evidence of “pay to play” by looking only at who got to “play,” without also looking at who got turned down.

The inability to calculate a risk ratio is a problem with many types of data that journalists use, but not all. Imagine looking for oil industry influence in a politician’s voting records. If you have good campaign finance data you know how much the oil companies donated to each politician. You also know how each politician voted on bills that affect the industry, so you know when oil money both did and didn’t get results. Meeting records are not like this, because they don’t record the names of the people who wanted to meet with a politician but didn’t.

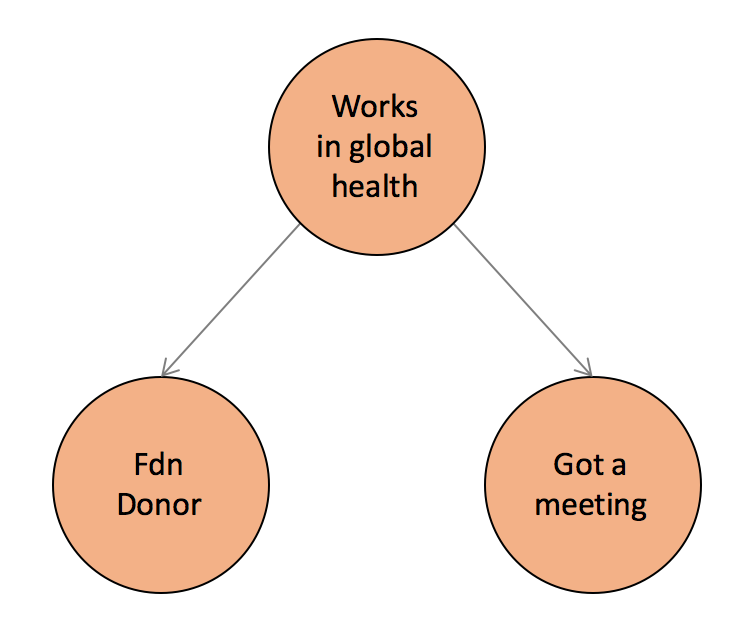

Then there’s the problem of proving cause. Even when you can compute a relative risk, and the data suggests that more donors than non-donors got a meeting, corruption only happened if the payment caused the meetings. There are all sorts of possible confounding variables that will cause the risk ratio to overestimate the causal effect, that is, overestimate what money buys you. What sort of factors would cause someone both to meet with Clinton and donate to the Clinton Foundation, which does mostly global health work? All sorts of high-level folks might have business on both fronts. For example, there are plenty of people working in global health at the international level, coordinating with governments and so on.
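A toy calculation shows how a confounder inflates the ratio. Every number below is invented for illustration: a hypothetical population of 1000 people, 200 of whom work in global health and are more likely both to donate and to get a meeting. Within each group, donating has no effect at all on the chance of a meeting.

```python
# Hypothetical groups: (people, share who donate, chance of a meeting).
# The meeting chance is the same for donors and non-donors within a group,
# so by construction money buys nothing here.
groups = {
    "global_health": (200, 0.50, 0.50),
    "everyone_else": (800, 0.05, 0.05),
}

donors = donors_met = non_donors = non_donors_met = 0.0
for people, p_donate, p_meet in groups.values():
    donors += people * p_donate
    donors_met += people * p_donate * p_meet
    non_donors += people * (1 - p_donate)
    non_donors_met += people * (1 - p_donate) * p_meet

# Risk ratio computed on the pooled population, ignoring the groups.
pooled_rr = (donors_met / donors) / (non_donors_met / non_donors)
print(f"pooled risk ratio: {pooled_rr:.2f}")
```

Inside each group the risk ratio is exactly 1, yet the pooled ratio comes out at roughly 3.6, because donors are disproportionately drawn from the group that gets meetings anyway.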

Of course, people working together without the influence of money between them can still be doing terrible things! That is a different type of crime though. It’s not the pervasive money-as-influence-in-politics story that data journalists might hope to find statistically, and that’s the kind of story the AP was after.

Unfortunately, most people don’t think about the influence of money in this way. They only see evidence of an association between money and outcomes, without thinking about 1) those who wanted something and never got it, and 2) factors that would align two people without one paying the other, like shared goals. It’s all guilt by association.

In short, political science is hard and we can’t conclude very much from looking at meetings and donors! Yet I suspect it will still be quite difficult for many people to accept that the AP story is largely irrelevant to the question of whether Clinton was selling access. It is the association that seems suspicious to us, not the relative advantage. Suppose we know that half of the people who got promoted brought a bottle of wine to the boss’s garden party. That means nothing if half of the company brought a bottle of wine to the boss’s garden party. But suppose instead that half of the people who got promoted slept with the boss. Now that seems like an open and shut case of “pay to play,” no? Not if the boss also slept with half of the rest of the employees. While that would be wildly inappropriate, it’s not trading favors.

It seems that our perception of the association between acts and outcomes depends far more on our judgment of the act than on whether or not it actually gives you an advantage. Yet “advantage” is the whole idea of quid pro quo.

Which is not to say that Clinton wasn’t influenced by donations to her foundation. Who can say that it was never a factor? In fact she wouldn’t even need to give actual advantage to donors. Just the appearance, promise, or hope of advantage might be enough to shake people down, and that could be called corruption too. All I’m saying here is that we’re not going to be able to see statistical evidence of pay-to-play in meeting records.

We can, however, look in the data for specific leads about specific fishy transactions. To the AP’s credit, much of the long story was exactly that, though having a meeting about helping a Nobel Peace Prize winner keep his job at the head of a non-profit microfinance bank may not feel like much of a smoking gun.

The AP, being the AP, was extremely careful not to make factually incorrect statements. It’s merely the totality of the piece that implies malfeasance. Or not. Let the readers make up their own minds, as editors love to say. I find this a monumental cop-out, because the process of inferring corruption from the data is subtle! Readers will not be equipped to do that, so if we are using data as evidence we have to interpret it for them. The story could have, and in my opinion should have, explained the limitations of the data much more carefully. The statistics are at best ambiguous, and at worst suggest that donors got no special treatment (if you compare to the total number of donors, as above). The numbers should never have been in the lede, much less the headline.

But then, would there have been a story? Would the AP have run, or should it have run, a story saying “here are some of the people Clinton met with who are also donors”? That’s not nearly as interesting a story, and that is its own kind of media bias. The tendency is towards stronger results, even sensational results. Or no story at all, if not enough scandal can be found, which is straight up publication bias.

The broader point for data journalists is that it is extremely difficult to prove corruption, in the sense of quid pro quo, just by counting who got what. To start with, we also need data on who wanted something but didn’t get it, which is often not recorded. Then we need an argument that there are no important confounders, nothing that is making two people work together without one paying the other (of course they could still be co-conspirators doing something terrible, but that would be a different type of crime). The AP counted only those who got meetings and didn’t even touch on non-corrupt reasons for the correlation, so the numbers in the story, the headline numbers, mean essentially nothing, despite the unsavory association.