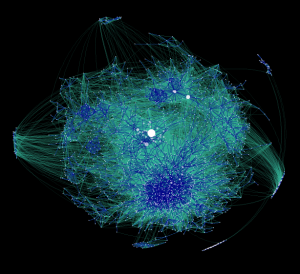

How much overlap is there between the web in different languages, and what sites act as gateways for information between them? Many people have constructed partial maps of the web (such as the blogosphere map by Matthew Hurst, above) but as far as I know, the entire web has never been systematically mapped in terms of language.

Of course, what I actually want to know is, how connected are the different cultures of the world, really? We live in an age where the world seems small, and in a strictly technological sense it is. I have at my command this very instant not one but several enormous international communications networks; I could email, IM, text message, or call someone in any country in the world. And yet I very rarely do.

Similarly, it’s easy to feel like we’re surrounded by all the international information we could possibly want, including direct access to foreign news services, but I can only read articles and watch reports in English. As a result, information is firewalled between cultures; there are questions that could very easily be answered by any one of tens or hundreds of millions of native speakers, yet are very difficult for me to answer personally. For example, what is the journalistic slant of al-Jazeera, the original one in Arabic, not the English version, which is produced by a completely different staff? Or, suppose I wanted to know what the average citizen of Indonesia thinks of the sweatshops there, or what is on the front page of the Shanghai Times today (and does such a newspaper even exist?). What is written on the 70% of web pages that are not in English?

We all live on the same physical planet, but the information worlds we inhabit must be vastly different. There are many reasons for this other than language, but language alone is enough to isolate humanity from itself.

And so, my question: how many islands are there in our multi-cultural information space, and how are they connected? I am willing to bet that a full-scale web map would show several large networks in the main languages of the web (English, Chinese, Spanish, Japanese, German, etc.) but few connections between them. Those few connections would be the web sites frequented by bilingual or bi-cultural individuals, who after all are the true gateways between cultures. The structure of the interconnections might tell us something about the relationships between cultures, and the actual number of links might provide some measure of how close or how far apart we actually are. The individual URLs themselves would also be extremely valuable information, representing high-bandwidth links between cultures, the trans-oceanic fiber between continents in the infosphere.

There is a second geography to the world that we’ve never seen. I don’t even know what I’m missing.

Creating such a map would be a trick, but by no means out of the reach of an academic project or a small company. Google says there are currently over one trillion (10^12) unique web pages (for their particular definition of “unique”, which is more complex than it might seem). Unlike a search engine, a language-based web map does not require the full contents of every page, merely the outgoing URLs and a discrete categorization of the language (which can be automatically determined even without any document meta-data). Assuming that each URL is assigned a unique 32 bit ID, another 32 bits for language and other info, and then links to an average of 20 other pages (estimates vary), this is about 100 terabytes of data, or perhaps $15,000 worth of storage at current prices. This index could be created from a fresh crawl, or by parsing an existing one, such as from the folks at the brand new and very awesome DotBot open index of the web.
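The arithmetic behind that estimate can be sketched in a few lines of Python. The constants are the figures quoted in the paragraph; the function name and its parameters are my own, purely for illustration. One caveat worth flagging: a 32 bit ID can only distinguish about 4.3 billion values, so a real index of a trillion pages would need wider IDs. The sketch keeps the post's figures by default and lets you change the ID width.

```python
# Back-of-envelope size of a "links only" web index.
PAGES = 10**12        # unique pages, per Google's figure quoted above
META_BYTES = 4        # 32 bits for language and other info
LINKS_PER_PAGE = 20   # assumed average number of outgoing links


def index_size_bytes(pages=PAGES, links_per_page=LINKS_PER_PAGE, id_bytes=4):
    """Bytes needed if every page stores its own ID, its metadata,
    and one ID per outgoing link."""
    per_page = id_bytes + META_BYTES + links_per_page * id_bytes
    return pages * per_page


print(f"{index_size_bytes() / 10**12:.0f} TB")            # 32 bit IDs
print(f"{index_size_bytes(id_bytes=8) / 10**12:.0f} TB")  # 64 bit IDs
```

With 32 bit IDs this comes to 88 TB, which rounds to the "about 100 terabytes" above; with 64 bit IDs it is roughly double that, which is still well within reach of a small project.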

The next step would be to generate the visualization of such a massive data set. The complete graph could be laid out in two or three dimensions using existing clustering methods. The resulting map could be traversed using GPU-accelerated rendering techniques for very large data sets, probably after some sort of hierarchical pre-processing that produces proxies for zoomed-out views of the network. A usable UI would be crucial; the entire map needs to be navigable at multiple scales and composed of live, hyperlinked objects. The right visualization also depends on what you are trying to discover; ultimately, there can be no single map because the choice of visualization is dependent upon usability and aesthetics, as the huge variety of beautiful maps at Visual Complexity demonstrates.

The analysis could go much deeper with more computing power. Machine translation is currently poor, but it is probably good enough to detect whether one document is a translation of another. With this capability, we would actually be able to quantify the percentage of (public) textual information that makes it from one language into another and identify the key organizations that act as conduits. Further study might reveal fascinating things, such as selection biases in the types of news or information that get translated. The implications for differences in belief between cultures are obvious.

Yet even a “links only” data set could still answer some highly revealing questions, such as “what percentage of web sites are visited by people from multiple cultures?” or even “what is the best gateway between Polish and English film reviews?” This could be done without visualization, but it would be a mistake not to draw the actual maps. Not only do pictures engage our spatial reasoning in a way that raw bits never can, but such a map would re-make an obvious point that is too often lost: in terms of communication between cultures, the world is not nearly as small or interconnected as we’d like to think it is.
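To make the "links only" questions a bit more concrete, here is a minimal sketch of two such queries. Everything in it is hypothetical: the `.example` site names, the tiny link list, and the function names are made up for illustration, and hyperlinks are only a rough proxy for who actually visits a site.

```python
from collections import Counter

# Hypothetical toy data: each site's language, and directed links between sites.
lang = {
    "a.example": "en",
    "b.example": "en",
    "c.example": "pl",
    "d.example": "pl",
    "e.example": "ja",
}
links = [
    ("a.example", "b.example"),
    ("b.example", "c.example"),
    ("c.example", "d.example"),
    ("d.example", "b.example"),
    ("e.example", "a.example"),
]


def cross_language_share(links, lang):
    """Fraction of links whose source and target are in different languages."""
    cross = sum(1 for src, dst in links if lang[src] != lang[dst])
    return cross / len(links)


def gateways(links, lang, a, b):
    """Rank sites by how many links they take part in that join languages a and b."""
    counts = Counter()
    for src, dst in links:
        if {lang[src], lang[dst]} == {a, b}:
            counts[src] += 1
            counts[dst] += 1
    return counts.most_common()


print(cross_language_share(links, lang))
print(gateways(links, lang, "en", "pl"))
```

On this toy data three of the five links cross a language boundary, and `b.example` comes out as the top Polish-English gateway. On a real crawl the interesting part would be how small that first number turns out to be.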
