UPDATE: Debrouwere continues the conversation with a response to the key points here, in the comments to his original post.

Dutch journalist/coder Stijn Debrouwere has written a very thorough post describing the ways in which standard tags, like the ones on this blog or on Flickr, fall short when applied to news articles. There are lots of things we might like to know about a story, such as where and when it happened and who was involved. This additional information, sort of like the index to a book, is known as “metadata,” and the online journalism community has been calling loudly for its use, Debrouwere included:

Each story could function as part of a web of knowledge around a certain topic, but it doesn’t.

So here’s a well-intentioned idea you’ve heard before: journalists should start tagging. Jay Rosen insists that “Getting disciplined and strategic about tagging may be one way professional journalism separates itself from the flood of cheap content online.” Tags can show how a news article relates to broader themes and topics. Just the ticket.

News metadata is a major topic, and many people have speculated deeply about the value of creating news metadata at the time of reporting, such as the ever-sarcastic Xark and the thoughtful Martin Belam who writes about why “linked data” is good for journalism. But I’m going to respond to Debrouwere because I read him today, because he has lovely diagrams that explain his good ideas, and because, in criticizing “tags” as a form of metadata, I think he misses some very important points.

And he’s not alone. My sense is that many of the coder-journalists of today have not learned from the mistakes of generations of technically-minded people who wished to talk about the world in more precise ways.

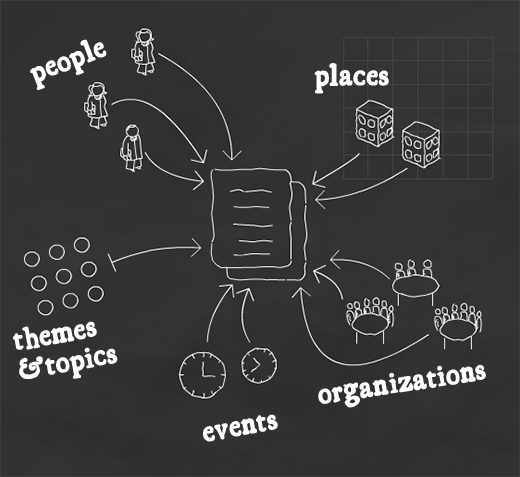

Moving forward from simple tagging, Debrouwere imagines more sophisticated annotation schemes that start to pick up on what the tags actually mean. For starters, the tags could be drawn from separate “vocabularies.” Does a tag refer to a person, or a place, or perhaps an event? Debrouwere uses the following picture, which I’m going to borrow here because it explains the idea so nicely:

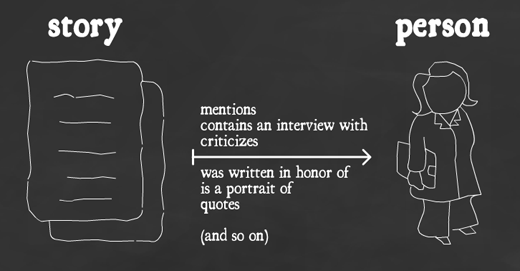

But, he says, we can get even more sophisticated. What did the story actually say? If it mentioned a person, what did it say about them? Was it an interview? A profile? Did it criticize them? Here’s the diagram he draws:

He imagines using this information to perform chains of inferences, like so:

Barack Obama belongs to the Democratic Party and he’s from Chicago. If we tag an article with Barack Obama, it’s likely that the article also has something to do with the Democratic Party. If we’ve specified that the article is about Obama, and we’ve specified that Obama is part of the DP, the system now has all the necessary information to suggest our article about Obama as a possibly interesting related read on the topical page for the democratic party, even if we didn’t explicitly indicate that link.

First of all, note that this sort of thing is already possible, quite often, using tags as they exist today. Simple analysis of co-tagging information will tell us that Obama is related to the Democratic party, because many articles will be tagged with both. Which is not to say that encoding such relationships explicitly isn’t a good idea. We can do this sort of thing using “triples,” which are fundamental to the nascent evolution of the internet into a web of “linked data”:

<Barack Obama> belongs-to-party <Democratic Party>

Here, “Barack Obama” is an object from, say, the “people” vocabulary, and “Democratic Party” is from, perhaps, the “political party” vocabulary, or maybe just from “groups.” Essentially, these are tags that have been pre-categorized. The relationship between the two is expressed by the “belongs-to-party” predicate.
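To make the idea concrete, here is a minimal sketch of how such triples might drive the "related read" suggestion Debrouwere describes. Everything in it is an assumption made for illustration: the predicate names, the article ids, and the `related_articles` helper are hypothetical, and real linked data systems would use URIs rather than bare strings.

```python
# Hypothetical triples: (subject, predicate, object).
triples = {
    ("Barack Obama", "belongs-to-party", "Democratic Party"),
    ("Barack Obama", "is-from", "Chicago"),
}

# Plain tags on articles: article id -> set of tagged entities (made-up data).
article_tags = {
    "obama-speech": {"Barack Obama"},
    "city-budget": {"Chicago"},
}


def related_articles(topic, triples, article_tags):
    """Return ids of articles tagged with the topic itself, or with any
    entity linked to the topic by a belongs-to-party triple."""
    members = {s for (s, p, o) in triples if p == "belongs-to-party" and o == topic}
    wanted = members | {topic}
    return sorted(a for a, tags in article_tags.items() if tags & wanted)


# The Obama article surfaces on the party's topic page even though nobody
# tagged it "Democratic Party" directly.
print(related_articles("Democratic Party", triples, article_tags))
```

Note that the inference is only as good as the single `belongs-to-party` assertion behind it, which is exactly where the trouble starts.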

But I argue that this is a rigged example. The world is normally much more messy.

“Are you now or have you ever been a member of the communist party?” was a killer question in its day, with complex answers like “I only attended one meeting.” And if parsing politicians’ statements were easy, then Politifact wouldn’t devote entire articles to the question of whether a single sentence was true or false. Further, they distinguish between different “grades” of truth, like “mostly true.” Mathematical logic, which underlies the sort of news inferences that Debrouwere and others discuss, doesn’t deal with “mostly true.”

The problem is that the world is not neatly categorizable.

Don’t get me wrong: vocabularies and relationships (à la linked data triples) are surely a good idea. But they have some serious drawbacks that relate to very deep issues in knowledge representation.

Debrouwere says, “Events happen at a certain place and at a certain time.” Sometimes. For a house fire or a shooting, maybe, but how “long” were the post-election protests in Iran last summer? They continued at varying intensity for several days, then flared up weeks later. Was that one protest or two? And what about a Facebook protest that gathered supporters over the course of a week? “When” and “where” did that happen?

Or, take the example of describing what an article says about someone. How do we decide when a story “criticizes” someone? There will always be boundary cases — lots of them in professional reporting. How do we ensure inter-rater reliability? Can we extract any real data from analyses of this tag if we have no other reference points with which to interpret it?
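One common way to put a number on inter-rater reliability is Cohen's kappa, which compares how often two raters agree with how often they would agree by chance. The sketch below is my own illustration, not something from Debrouwere's post: the two editors and their labels are invented, and the `cohens_kappa` helper is a plain implementation of the standard two-rater formula.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    # Fraction of items on which the two raters actually agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, given each rater's label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Invented example: two editors decide whether each of eight stories
# "criticizes" its subject.
editor_a = ["criticizes", "neutral", "criticizes", "neutral",
            "neutral", "criticizes", "neutral", "neutral"]
editor_b = ["criticizes", "neutral", "neutral", "neutral",
            "criticizes", "criticizes", "neutral", "neutral"]

print(round(cohens_kappa(editor_a, editor_b), 2))
```

In this made-up case the editors agree on six of eight stories, yet kappa comes out at only about 0.47, because much of that agreement would be expected by chance alone.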

Something is always lost in categorization. That is the point! To say that two things are like one another is to ignore their differences, for the purposes of the present discussion. Unfortunately, what can safely be ignored depends on the discussion. Simple date and place notations work for some purposes, and fail miserably for others. They are not very rich, and even worse, we don’t know exactly how much has been lost in each case. Knowledge of that error is sometimes critical, especially when trying to make chains of inferences, where errors multiply.
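A little arithmetic shows how quickly errors multiply along a chain. The sketch below assumes, purely for illustration, that every link in a chain of inferences is correct 90% of the time and that the errors are independent. Neither number nor assumption comes from real data, and `chain_reliability` is a hypothetical helper.

```python
def chain_reliability(link_accuracies):
    """Probability that every link in an inference chain is correct,
    assuming (unrealistically) that errors are independent."""
    result = 1.0
    for p in link_accuracies:
        result *= p
    return result


# Assumed 90% accuracy per link; watch the whole chain degrade with length.
for length in (1, 3, 5, 10):
    print(length, round(chain_reliability([0.9] * length), 3))
```

Under these assumptions a five-step chain is right only about 59% of the time, and a ten-step chain about 35% of the time. In practice we rarely know the per-link accuracy at all, which is the deeper problem.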

The reason we use text for reporting is that it’s good at representing these sorts of ambiguities. Strict adherence to the religion of finite relationship vocabularies leads one to believe that the world can be modeled in first-order logic (predicate logic), and this just isn’t true. Chains of automatic inference fail very quickly when applied even to very simple “real world” situations. The Artificial Intelligence research community went down that road for decades and found it really problematic, which is why we’re now seeing the rise of “statistical” AI techniques, such as statistical machine translation. This approach tries to find patterns in vast amounts of data rather than working out hard underlying rules; the categorization comes after you look at all available data, not before.

And therein lies the great virtue of tags: they are just about the simplest possible way of saying something, and don’t imply or require any particular inferential framework. They’re much harder to get wrong than more complex associations, and they make sense only in aggregate, and this makes them much more robust than predicate sentences. A tag says only, “there’s some association.” Full stop. I find this ambiguity a virtue. The meaning comes out of the relationships between the tags, articles, and users. Meaning is always relative, and tags force us to understand this, because there’s nothing else to go on.
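Here is a minimal sketch of what "meaning in aggregate" can look like: counting how often pairs of tags appear on the same article. The articles and tags are invented for illustration, and `cotag_counts` is a hypothetical helper. A real system would want far more data and some normalization for tag popularity.

```python
from collections import Counter
from itertools import combinations

# Invented data: each article is just a set of plain tags.
articles = [
    {"Barack Obama", "Democratic Party", "health care"},
    {"Barack Obama", "Democratic Party"},
    {"Barack Obama", "Chicago"},
    {"Democratic Party", "Congress"},
]


def cotag_counts(articles):
    """Count how many articles carry each unordered pair of tags."""
    counts = Counter()
    for tags in articles:
        # Sort so that each pair is always stored in the same order.
        for pair in combinations(sorted(tags), 2):
            counts[pair] += 1
    return counts


counts = cotag_counts(articles)
# The most frequently co-occurring pair suggests an association,
# without anyone having declared what kind of association it is.
print(counts.most_common(1))
```

Nothing here says that Obama "belongs to" the party. The counts say only that the two tags keep turning up together, and that is often enough.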

Tags allow (or force) what we might call the “Google solution”: let humans describe it in a way that makes sense to them, then sort it all out later algorithmically. There are limits to this, of course, which is why metadata has value. But ultimately, computers serve humans, so the Google solution will always be a win when it is possible.

Linked data will be valuable because of the links. I predict that its main use will be as a sort of “super tagging” system: we still have “tags” in the linked data world, it’s just that they’re now all “uniform resource identifiers” that are visible to everyone on the web. This means that tags can be shared between systems and maintained by communities, which only makes them more powerful. In fact, this is exactly what we’re already seeing, with the Wikipedia-derived DBpedia at the center of all those linked data “bubble diagrams.”

Linked data also supports predicates that say what the relationship between the tags is, like the “Barack Obama is a member of the Democratic Party” example. But I predict that these will be much less useful, offering almost none of the “machine understanding” that’s supposed to come with the semantic web. I don’t know what “understanding” means if not the ability to draw inferences of some sort, and predicates are just too fragile, too subject to mis-categorization, too limited to capture the rich relationships of the real world. I do believe that we’ll see amazing new “artificial intelligence”-like applications built on top of linked data, but they’ll be built statistically: they’ll ignore the predicates or use them only in special cases, or only in aggregate.

Having said all this, I am fully in support of adding better metadata to news stories. I believe the “entity recognition” performed by OpenCalais is valuable, and that carefully managed tag vocabularies are essential. Often “location” will be a genuinely useful tag, and I can see the possibility for some wonderful news mashups.

But please, let’s not imagine that we can capture even the “essential” details of real journalism with any fixed vocabulary. And let’s not oversell the potential of machine reasoning or data-mining based on carefully-annotated news metadata.

We’re a very long way from understanding how to represent reality in machine-readable form.

For more on this topic, I recommend:

- “Ontology is overrated” by Clay Shirky

- “Metacrap” by Cory Doctorow

- “What is a knowledge representation?” by Davis et al. at MIT
