How do we know that the work that journalists do accomplishes anything at all? And what does journalism do, exactly, beyond vague statements like “supports democracy” and trivial ones like “gives me movie reviews”?

I made this image a couple months ago to introduce the question at a conference. A reporter researches and writes a story. The first arrow represents the process that gets that story published. We understand that process quite well, and the internet makes publishing really cheap and easy. Then there’s a process that takes published, accurate information and turns it into truth and justice for all. That’s the part that’s fuzzy. In fact I don’t think we understand it at all. I call this “the last mile problem” in journalism — how does journalism actually reach people?

Journalists occasionally claim a scalp, such as by embarrassing a politician enough to force them to resign, or focussing attention on some issue long enough to get legislation passed. Journalism also theoretically informs citizens so they can vote responsibly, in the elections which happen every few years. As I’ve argued before, these are weak levers by which to shift society. I’m less interested in what journalism does in extraordinary times, and more interested in how the journalist’s work improves the day-to-day operation of a society, and the experiences of the people living in it.

It’s possible that much of the journalism we have is effective. Maybe the mere existence of consistent reporting on the machinations of the powerful keeps them in line, and we’ll only know what journalism really gave us when it disappears and civilization collapses into a mire of secrecy and corruption. Or maybe that’s already happened. How would we know? How can we tell whether journalism, as a local or a global endeavor, is doing better this year than last?

Other fields have goals

I like to hang around the international development community, and those people have real problems. People working in public health are charged with improving access to clean water or preventing the spread of HIV. Others try to get more girls into school, or to raise entire communities out of poverty.

There are lots of ways to attack such complex social problems. An NGO or a foundation or a UN organ could lobby local politicians, produce research reports, provide services directly to affected populations, or launch a public awareness campaign. The way in which an organization proposes to have an effect is called its “theory of change.” This is a term I hear frequently at gatherings of development workers, and from the staff of NGOs and international organizations. Such organizations must continually develop and articulate their theory of change in order to secure philanthropic funding.

Journalism has no theory of change — at least not at the level of practice.

I’ve taken to asking editors, “what do you want your work to change in society?” The answer is generally along the lines of, “we aren’t here to change things. We are only here to publish information.” I don’t think that’s an acceptable answer. Journalism without effect does not deserve the special place in democracy that it tries to claim.

The question of “what change should journalism produce” is hard because it is unavoidably a normative question, a question about how journalists envision a “better” world. At the moment, the field of professional journalism is mired in intense confusion about its role and the meaning of classic standards such as “objectivity.” This has obscured discussion of the field’s goals at a moment of great transition brought on by new communications technology, precisely the time when clarity is most needed.

It’s telling that discussions of journalism’s fundamentals frequently harken back to the great debate of Lippmann vs. Dewey. That happened in the 1920s. This was not only before live television and before the internet; it was also before bastions of modern reasoning such as statistical inference, the study of cognitive biases, and the social construction of knowledge were fully developed. Other fields have done much better in adapting to the philosophical and technological revolutions of the last century.

Medicine in general and public health in particular have become relentlessly evidence-based. It’s no longer enough to run anti-smoking ads; we now require those responsible for public health to show that their preferred method of behavior modification actually reduces disease. Meanwhile, marketers have rallied around the idea that the purpose of their work is to get targeted individuals to do something, whether that’s purchasing a product or voting for a particular candidate. That may not be an appropriate goal for non-advocacy journalism, but marketing and public relations researchers have made very careful studies of communication, recall, and belief.

Similar concerns over how messages are received arise in many fields, from crisis communications to public diplomacy. But not in journalism. If journalism does not change action it must change minds, but the tools and language of belief change seem to be entirely missing from the profession.

Journalism as surveillance of ignorance

It used to be the job of an editor to decide what to publish. Maybe it is now the job of an editor to decide what needs to be known. These are not at all the same thing. They used to be, when nothing could be done with a story after the ink hit paper. The internet allows so much more — promotion within specific communities, feedback on readership and reception, conversation as opposed to oratory. And potentially, cheap techniques to determine what people already believe.

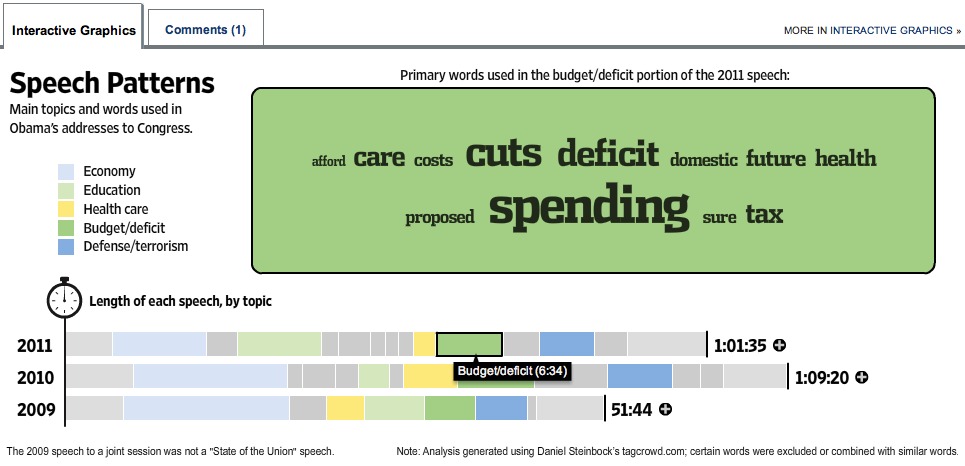

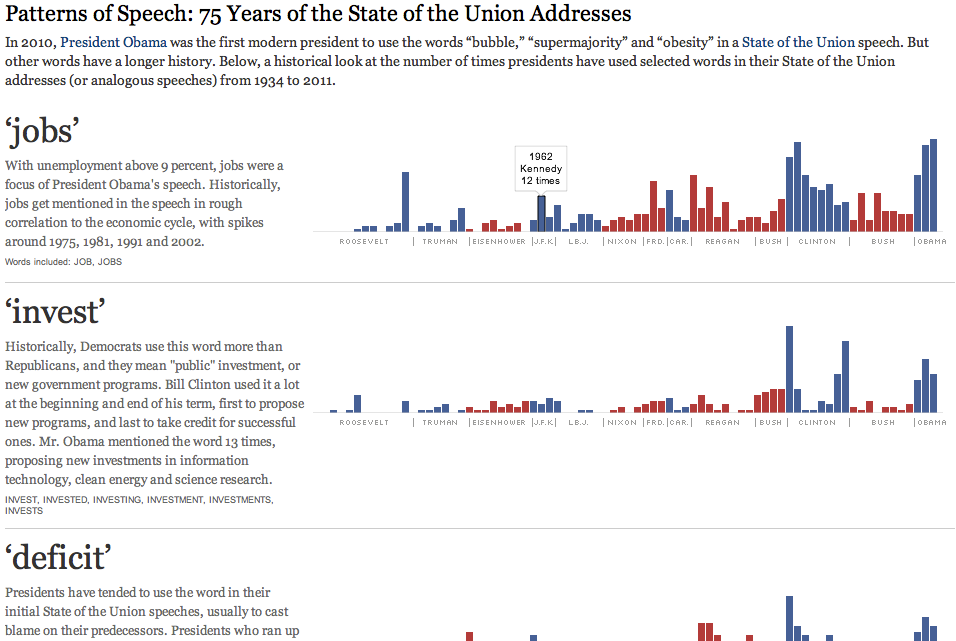

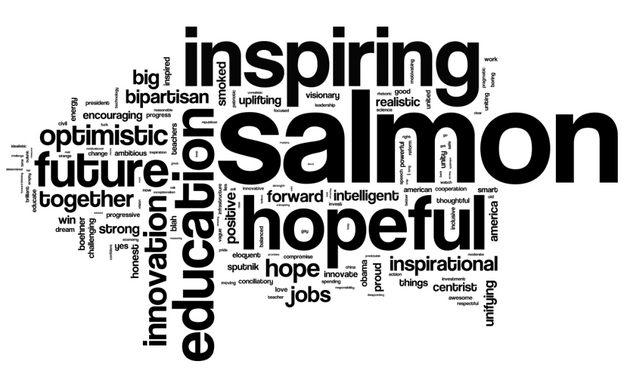

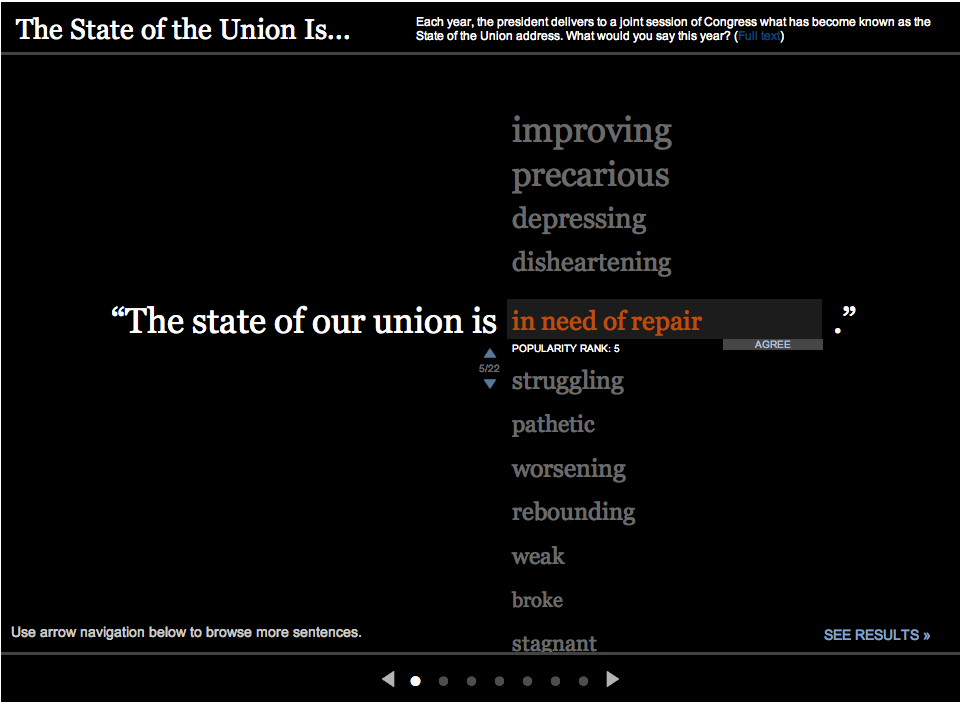

We should expect that users will largely be choosing for themselves what to read and view. That’s reality, and that’s fine, and systems that make it easy to satisfy curiosity are systems that will make us smarter (even though we’ll mostly use them for entertainment). But I believe there will still be an identifiable set of common content, the few things that the public, or some targeted fraction of it, absolutely has to know to participate meaningfully in the civic issues of the day. This is more or less what editors put on the front page today. But rather than the headlines reflecting the most important events, perhaps they should reflect the most pernicious misconceptions. Good journalists already have some sense of this, and every so often we learn of an alarming gap in public knowledge. A majority of Americans believed for years that Saddam Hussein was linked to 9/11, for example. Today, most Americans don’t know what’s actually in Obama’s new health care laws. (I apologize again to my international readers for the US-centric examples; I’d love to hear of similarly woeful tales from other countries.)

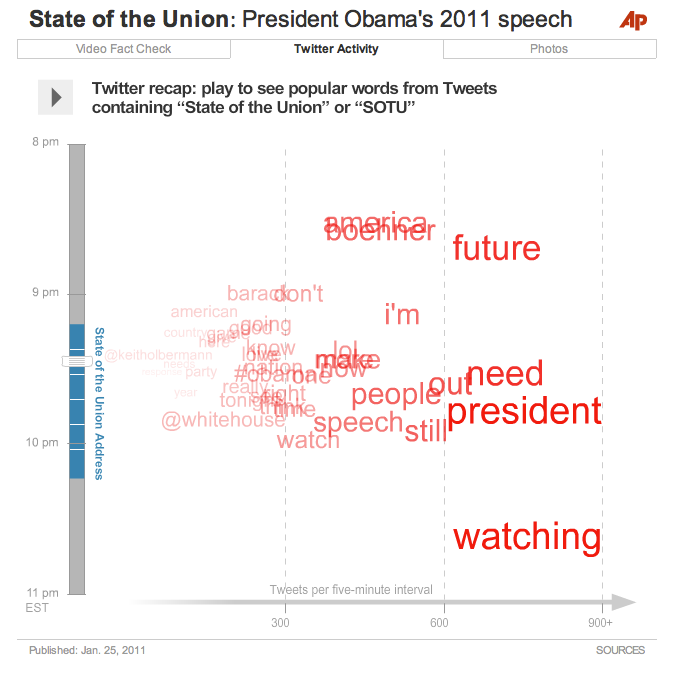

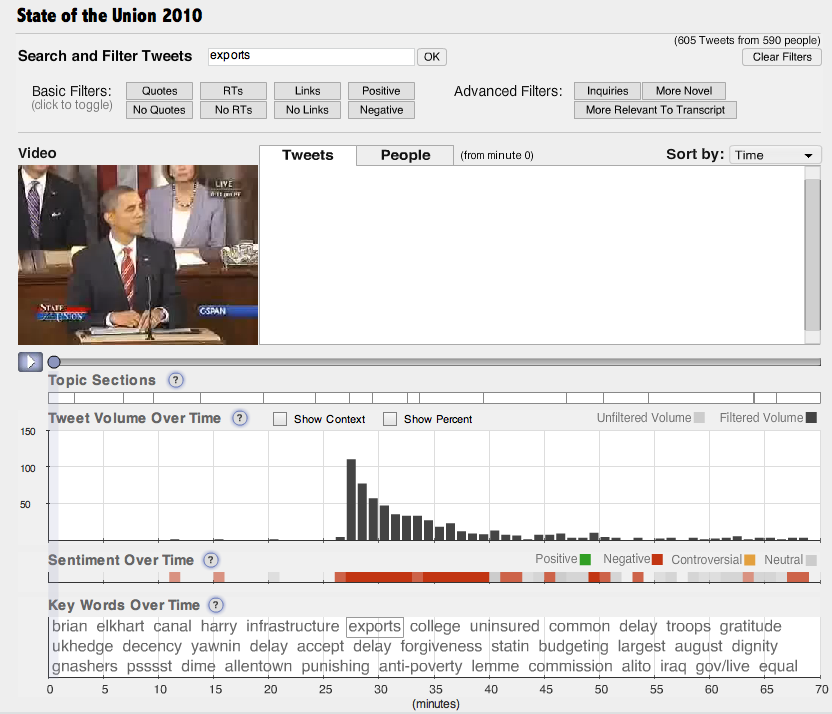

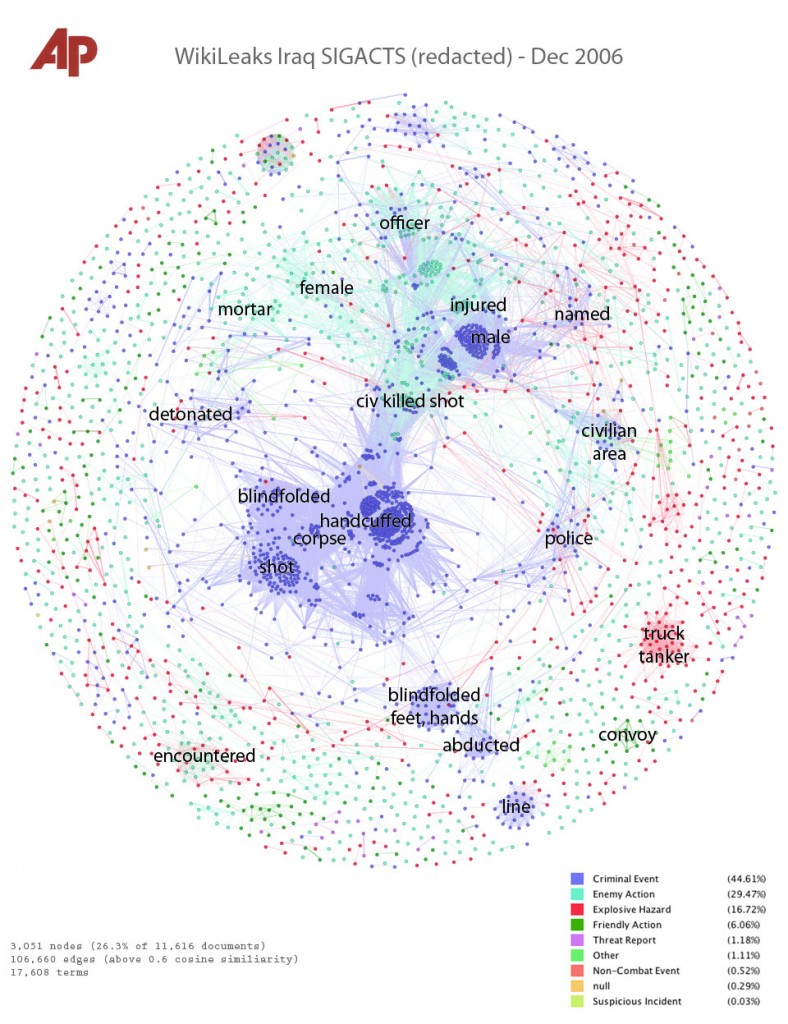

Combatting ignorance is harder than publishing. It’s my best guess for the second, mysterious arrow in the diagram above. Fortunately we also have new tools. We have reams and reams of data that people voluntarily put online, the “data exhaust” of entire societies. We also have old-fashioned public opinion polls, and their lightweight cousin, online polls (though self-selection bias may render online surveys useless for all but the most casual work). Somewhere in all this data and all this communication, it must be possible to figure out what it is that people actually believe, and where those beliefs are factually wrong in an uncomplicated way, precisely the way that an editor would say “that’s not true, we can’t print it.”

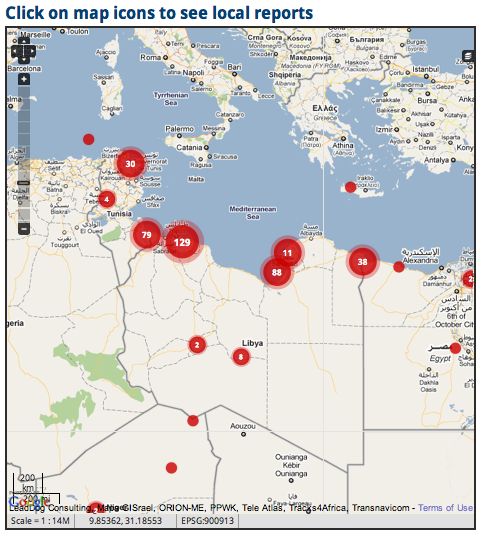

There are many possibilities for understanding the beliefs of an audience. I am particularly intrigued by opinion mapping, deliberative polling, and the attempts of UN Global Pulse to create data-driven societal monitoring systems. It may actually be possible to cheaply measure the state of public knowledge, which would also give us concrete metrics for improvement. We need new ways of thinking about the surveillance of ignorance, and we need software to implement them. But more than anything else, we need journalists attuned to what it is that people don’t know. Good journalists already are; they can see what is missing from discussion — whether that’s a question that no one has answered or a challenge to a prevalent belief — and do the hard work of adding it.

This effort applies at all scales. Each journalist has an audience or audiences, their communities of concern. Each could track what their audience already knows and believes. The job of the journalist, so conceived, is not merely to report the happenings, but to ensure that the audience is aware of and understands the most crucial of them. That won’t be easy. Aside from the challenges of determining what an audience already knows, people don’t like to be told they’re uninformed or wrong. This is why I believe a journalist needs to learn everything there is to know about public communication, borrowing and adapting from marketing experts and public health planners. Genuine honesty and humility seem to me the ethical core, and newsroom transparency is a critical check on this power.

Of course, decisions would have to be made about what are misconceptions and which of them are important enough to combat. Decisions have to be made already about what to cover and promote with limited resources, and these hard choices are the iceberg that sinks any hope of a truly “impartial” journalism. It’s a reality that the profession has to deal with every day, and I wish we would get on with the work of crafting and communicating our normative stance, rather than insisting that “objectivity” means we don’t have one. (Even Wikipedia explains its norms in great detail.) I’d like to start with a list of things that journalists wish were better known. Be honest. I know you’ve already thought about this.

But if we can get over that hurdle — if we can admit that journalism needs concrete goals — then we stand a chance of doing better journalism, and knowing when we’re doing it. For me, the insane possibility of new communications technology carries with it the obligation to do better than we ever have before.

UPDATE: As if on cue, a major study was released four days after I published this, showing that a majority of American voters were misinformed about the issues they voted on in the recent mid-term elections. I discuss what that means here.